Pre Trained Computer Vision Models

Notebook by:

Royi Avital RoyiAvital@fixelalgorithms.com

Revision History

| Version | Date | User | Content / Changes |
|---|---|---|---|
| 1.0.000 | 26/05/2024 | Royi Avital | First version |

# Import Packages

# General Tools

import numpy as np

import scipy as sp

import pandas as pd

# Machine Learning

# Deep Learning

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch.utils.tensorboard import SummaryWriter

import torchinfo

import torchvision

from torchvision.transforms import v2 as TorchVisionTrns

# Image Processing & Computer Vision

import skimage as ski

# Miscellaneous

import copy

from enum import auto, Enum, unique

import json

import math

import os

from platform import python_version

import random

import time

import urllib

# Typing

from typing import Any, Callable, Dict, Generator, List, Optional, Self, Set, Tuple, Union

# Visualization

import matplotlib as mpl

import matplotlib.pyplot as plt

import seaborn as sns

# Jupyter

from IPython import get_ipython

from IPython.display import HTML, Image

from IPython.display import display

from ipywidgets import Dropdown, FloatSlider, interact, IntSlider, Layout, SelectionSlider

from ipywidgets import interact

Notations

(?) Question to answer interactively.

(!) Simple task to add code for the notebook.

(@) Optional / Extra self practice.

(#) Note / Useful resource / Food for thought.

Code Notations:

someVar = 2; #<! Notation for a variable

vVector = np.random.rand(4) #<! Notation for 1D array

mMatrix = np.random.rand(4, 3) #<! Notation for 2D array

tTensor = np.random.rand(4, 3, 2, 3) #<! Notation for nD array (Tensor)

tuTuple = (1, 2, 3) #<! Notation for a tuple

lList = [1, 2, 3] #<! Notation for a list

dDict = {1: 3, 2: 2, 3: 1} #<! Notation for a dictionary

oObj = MyClass() #<! Notation for an object

dfData = pd.DataFrame() #<! Notation for a data frame

dsData = pd.Series() #<! Notation for a series

hObj = plt.Axes() #<! Notation for an object / handler / function handler

Code Exercise

Single line fill

vallToFill = ???

Multi Line to Fill (At least one)

# You need to start writing

????

Section to Fill

#===========================Fill This===========================#

# 1. Explanation about what to do.

# !! Remarks to follow / take under consideration.

mX = ???

???

#===============================================================#

# Configuration

# %matplotlib inline

seedNum = 512

np.random.seed(seedNum)

random.seed(seedNum)

# Matplotlib default color palette

lMatPltLibclr = ['#1f77b4', '#ff7f0e', '#2ca02c', '#d62728', '#9467bd', '#8c564b', '#e377c2', '#7f7f7f', '#bcbd22', '#17becf']

# sns.set_theme() #<! Apply SeaBorn theme

runInGoogleColab = 'google.colab' in str(get_ipython())

# Improve performance by benchmarking

torch.backends.cudnn.benchmark = True

# Reproducibility (Per PyTorch Version on the same device)

# torch.manual_seed(seedNum)

# torch.backends.cudnn.deterministic = True

# torch.backends.cudnn.benchmark = False #<! Makes things slower

# Constants

FIG_SIZE_DEF = (8, 8)

ELM_SIZE_DEF = 50

CLASS_COLOR = ('b', 'r')

EDGE_COLOR = 'k'

MARKER_SIZE_DEF = 10

LINE_WIDTH_DEF = 2

DATA_FOLDER_PATH = 'Data'

TENSOR_BOARD_BASE = 'TB'

# Based on newer batches of ImageNet data set (Not in the 1.2M for training)

L_IMG_URL = [
    'https://farm3.static.flickr.com/2278/2096798034_bfe45b11ee.jpg',
    'https://static.flickr.com/48/116936482_7458bb78c1.jpg',
    'https://farm4.static.flickr.com/3001/2927732866_3bd24c2f98.jpg',
    'https://farm4.static.flickr.com/3018/2990729221_aabd592245.jpg',
    'https://farm4.static.flickr.com/3455/3372433349_0444709b8f.jpg',
]

IMAGE_NET_CLS_IDX_URL = r'https://raw.githubusercontent.com/FixelAlgorithmsTeam/FixelCourses/master/DataSets/ImageNet1000ClassIndex.json'

# Download Auxiliary Modules for Google Colab

if runInGoogleColab:
    !wget https://raw.githubusercontent.com/FixelAlgorithmsTeam/FixelCourses/master/AIProgram/2024_02/DataManipulation.py
    !wget https://raw.githubusercontent.com/FixelAlgorithmsTeam/FixelCourses/master/AIProgram/2024_02/DataVisualization.py
    !wget https://raw.githubusercontent.com/FixelAlgorithmsTeam/FixelCourses/master/AIProgram/2024_02/DeepLearningPyTorch.py

# Courses Packages

import sys

sys.path.append('/home/vlad/utils')

from DataVisualization import PlotLabelsHistogram, PlotMnistImages

from DeepLearningPyTorch import NNMode

from DeepLearningPyTorch import RunEpoch

# General Auxiliary Functions

Pre Defined Models

Every Deep Learning framework offers Pre Defined models.

Loading them can be done in 2 flavors:

1. Model Definition
   Loading only the model definition of the architecture.
2. Model Definition with Pre Trained Weights
   Loading the model with weights pre trained on some dataset.

Option (1) is used for vanilla training of the model.

Option (2) is used in production or for Transfer Learning.

This notebook presents:

- Loading a model with weights trained on ImageNet 1000.
- Applying the model on random images from Flickr.
  The files are defined in L_IMG_URL.

(#) PyTorch Vision (TorchVision) offers a set of pre trained models in Models and Pre Trained Weights.

(#) There are other sites dedicated to models. It is common to use a Model Zoo. Searching for PyTorch Model Zoo will yield more options.

(#) Many papers come with links to a repository with the model definition and weights. See YOLOv9: Learning What You Want to Learn Using Programmable Gradient Information.

(#) Some repositories only offer the model definition. See Implementation of MobileNet in PyTorch.

(#) For CNN models, the concept of Receptive Field is fundamental. See Understanding the Receptive Field of Deep Convolutional Networks.
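(#) As a rough illustration of the Receptive Field note, the sketch below accumulates the receptive field layer by layer for the convolutional part of AlexNet. The kernel sizes and strides are assumptions taken from the TorchVision flavor of AlexNet (they are not listed in this notebook), and `ReceptiveField` is a hypothetical helper, not a library function.

```python
# Receptive field of stacked convolution / pooling layers (a minimal sketch).
# Each layer is given as (kernelSize, stride).
# Padding and point wise activations do not change the receptive field size.

def ReceptiveField(lLayers):
    rfSize = 1 #<! A single output pixel sees a single input pixel
    jump   = 1 #<! Distance (in input pixels) between adjacent output pixels
    for kernelSize, stride in lLayers:
        rfSize += (kernelSize - 1) * jump
        jump   *= stride
    return rfSize

# Assumed layout of the AlexNet feature extractor: Conv, Pool, Conv, Pool, Conv, Conv, Conv, Pool
lAlexNet = [(11, 4), (3, 2), (5, 1), (3, 2), (3, 1), (3, 1), (3, 1), (3, 2)]

print(ReceptiveField(lAlexNet)) #<! 195
```

Under these assumptions, each element of the last feature map depends on a 195 x 195 window of the input, which is most of a 224 x 224 image.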

# Parameters

# Data

imgSize = 224

# Model

# Name, Constructor, Weights

lModels = [
    ('AlexNet', torchvision.models.alexnet, torchvision.models.AlexNet_Weights.IMAGENET1K_V1),
    ('VGG16', torchvision.models.vgg16, torchvision.models.VGG16_Weights.IMAGENET1K_V1),
    ('InceptionV3', torchvision.models.inception_v3, torchvision.models.Inception_V3_Weights.IMAGENET1K_V1),
    ('ResNet152', torchvision.models.resnet152, torchvision.models.ResNet152_Weights.IMAGENET1K_V2),
]

# Training

# Visualization

Generate / Load Data

# Load Data

numImg = len(L_IMG_URL)

# Loads the Classes

fileId = urllib.request.urlopen(IMAGE_NET_CLS_IDX_URL)

dClsData = json.loads(fileId.read())

lClasses = [dClsData[str(k)][1] for k in range(1000)]
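(#) The list comprehension above relies on a specific layout of the JSON file: a dictionary keyed by the class index as a string, where each value holds an identifier followed by a readable class name. The sketch below uses a small hand written sample in that assumed layout (nothing is downloaded) and also builds a hypothetical reverse lookup from name to index.

```python
import json

# A hand written sample in the assumed layout of the class index file
jsonStr = '{"0": ["n01440764", "tench"], "1": ["n01443537", "goldfish"]}'

dSample  = json.loads(jsonStr)
lSample  = [dSample[str(k)][1] for k in range(len(dSample))] #<! Class name per index
dNameIdx = {clsName: clsIdx for clsIdx, clsName in enumerate(lSample)} #<! Reverse lookup

print(lSample)              #<! ['tench', 'goldfish']
print(dNameIdx['goldfish']) #<! 1
```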

Plot the Data

# Plot the Data

hF, vHa = plt.subplots(nrows = 1, ncols = numImg, figsize = (3 * numImg, 6))

for ii, hA in enumerate(vHa.flat):
    mI = ski.io.imread(L_IMG_URL[ii])
    hA.imshow(mI)
    hA.tick_params(axis = 'both', left = False, top = False, right = False, bottom = False,
                   labelleft = False, labeltop = False, labelright = False, labelbottom = False)
    hA.grid(False)

(?) Do the images have the same dimensions? What will be the effect?

# Plot Classes

lClasses[:15]

['tench',

'goldfish',

'great_white_shark',

'tiger_shark',

'hammerhead',

'electric_ray',

'stingray',

'cock',

'hen',

'ostrich',

'brambling',

'goldfinch',

'house_finch',

'junco',

'indigo_bunting']

Show the Models

This section shows the models using torchinfo.

(#) Since the information is limited to the architecture, there is no need to load the pre trained weights.

# Show the Model Architecture

tuInShape = (4, 3, 224, 224)

for ii, (modelName, modelClass, _) in enumerate(lModels):
    print(f'Displaying the {(ii + 1):02d} model.')
    oModel = modelClass()
    oModel = oModel.to('cpu')
    print(torchinfo.summary(oModel, tuInShape, col_names = ['kernel_size', 'output_size', 'num_params'], device = 'cpu'), end = '\n\n\n\n')

Displaying the 01 model.

===================================================================================================================

Layer (type:depth-idx) Kernel Shape Output Shape Param #

===================================================================================================================

AlexNet -- [4, 1000] --

├─Sequential: 1-1 -- [4, 256, 6, 6] --

│ └─Conv2d: 2-1 [11, 11] [4, 64, 55, 55] 23,296

│ └─ReLU: 2-2 -- [4, 64, 55, 55] --

│ └─MaxPool2d: 2-3 3 [4, 64, 27, 27] --

│ └─Conv2d: 2-4 [5, 5] [4, 192, 27, 27] 307,392

│ └─ReLU: 2-5 -- [4, 192, 27, 27] --

│ └─MaxPool2d: 2-6 3 [4, 192, 13, 13] --

│ └─Conv2d: 2-7 [3, 3] [4, 384, 13, 13] 663,936

│ └─ReLU: 2-8 -- [4, 384, 13, 13] --

│ └─Conv2d: 2-9 [3, 3] [4, 256, 13, 13] 884,992

│ └─ReLU: 2-10 -- [4, 256, 13, 13] --

│ └─Conv2d: 2-11 [3, 3] [4, 256, 13, 13] 590,080

│ └─ReLU: 2-12 -- [4, 256, 13, 13] --

│ └─MaxPool2d: 2-13 3 [4, 256, 6, 6] --

├─AdaptiveAvgPool2d: 1-2 -- [4, 256, 6, 6] --

├─Sequential: 1-3 -- [4, 1000] --

│ └─Dropout: 2-14 -- [4, 9216] --

│ └─Linear: 2-15 -- [4, 4096] 37,752,832

│ └─ReLU: 2-16 -- [4, 4096] --

│ └─Dropout: 2-17 -- [4, 4096] --

│ └─Linear: 2-18 -- [4, 4096] 16,781,312

│ └─ReLU: 2-19 -- [4, 4096] --

│ └─Linear: 2-20 -- [4, 1000] 4,097,000

===================================================================================================================

Total params: 61,100,840

Trainable params: 61,100,840

Non-trainable params: 0

Total mult-adds (Units.GIGABYTES): 2.86

===================================================================================================================

Input size (MB): 2.41

Forward/backward pass size (MB): 15.81

Params size (MB): 244.40

Estimated Total Size (MB): 262.63

===================================================================================================================
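(#) The `Param #` column and the size estimates of the AlexNet summary above can be reproduced by hand, which is a useful sanity check when reading such tables. The sketch below assumes layers with bias terms and 32 bit floats (4 bytes per value). The channel counts are read from the table, and `ConvParams` / `LinearParams` are hypothetical helpers.

```python
def ConvParams(kernelH, kernelW, numIn, numOut, useBias = True):
    # One (kernelH x kernelW x numIn) filter per output channel, plus an optional bias per channel
    return (kernelH * kernelW * numIn * numOut) + (numOut if useBias else 0)

def LinearParams(numIn, numOut, useBias = True):
    # A (numOut x numIn) weight matrix, plus an optional bias per output
    return (numIn * numOut) + (numOut if useBias else 0)

# (kernelH, kernelW, numIn, numOut) of the 5 convolution layers
lConv   = [(11, 11, 3, 64), (5, 5, 64, 192), (3, 3, 192, 384), (3, 3, 384, 256), (3, 3, 256, 256)]
# (numIn, numOut) of the 3 linear layers, the first one sees the flattened [256, 6, 6] tensor
lLinear = [(256 * 6 * 6, 4096), (4096, 4096), (4096, 1000)]

numParams    = sum(ConvParams(*tuC) for tuC in lConv) + sum(LinearParams(*tuL) for tuL in lLinear)
paramsSizeMb = numParams * 4 / 1e6            #<! 4 bytes per parameter
inputSizeMb  = 4 * 3 * 224 * 224 * 4 / 1e6    #<! Batch of 4 RGB images of 224 x 224

print(numParams)              #<! 61100840
print(f'{paramsSizeMb:0.2f}') #<! 244.40
print(f'{inputSizeMb:0.2f}')  #<! 2.41
```

Note that the 3 linear layers hold about 58.6 of the 61.1 million parameters, which is typical for this generation of architectures.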

Displaying the 02 model.

===================================================================================================================

Layer (type:depth-idx) Kernel Shape Output Shape Param #

===================================================================================================================

VGG -- [4, 1000] --

├─Sequential: 1-1 -- [4, 512, 7, 7] --

│ └─Conv2d: 2-1 [3, 3] [4, 64, 224, 224] 1,792

│ └─ReLU: 2-2 -- [4, 64, 224, 224] --

│ └─Conv2d: 2-3 [3, 3] [4, 64, 224, 224] 36,928

│ └─ReLU: 2-4 -- [4, 64, 224, 224] --

│ └─MaxPool2d: 2-5 2 [4, 64, 112, 112] --

│ └─Conv2d: 2-6 [3, 3] [4, 128, 112, 112] 73,856

│ └─ReLU: 2-7 -- [4, 128, 112, 112] --

│ └─Conv2d: 2-8 [3, 3] [4, 128, 112, 112] 147,584

│ └─ReLU: 2-9 -- [4, 128, 112, 112] --

│ └─MaxPool2d: 2-10 2 [4, 128, 56, 56] --

│ └─Conv2d: 2-11 [3, 3] [4, 256, 56, 56] 295,168

│ └─ReLU: 2-12 -- [4, 256, 56, 56] --

│ └─Conv2d: 2-13 [3, 3] [4, 256, 56, 56] 590,080

│ └─ReLU: 2-14 -- [4, 256, 56, 56] --

│ └─Conv2d: 2-15 [3, 3] [4, 256, 56, 56] 590,080

│ └─ReLU: 2-16 -- [4, 256, 56, 56] --

│ └─MaxPool2d: 2-17 2 [4, 256, 28, 28] --

│ └─Conv2d: 2-18 [3, 3] [4, 512, 28, 28] 1,180,160

│ └─ReLU: 2-19 -- [4, 512, 28, 28] --

│ └─Conv2d: 2-20 [3, 3] [4, 512, 28, 28] 2,359,808

│ └─ReLU: 2-21 -- [4, 512, 28, 28] --

│ └─Conv2d: 2-22 [3, 3] [4, 512, 28, 28] 2,359,808

│ └─ReLU: 2-23 -- [4, 512, 28, 28] --

│ └─MaxPool2d: 2-24 2 [4, 512, 14, 14] --

│ └─Conv2d: 2-25 [3, 3] [4, 512, 14, 14] 2,359,808

│ └─ReLU: 2-26 -- [4, 512, 14, 14] --

│ └─Conv2d: 2-27 [3, 3] [4, 512, 14, 14] 2,359,808

│ └─ReLU: 2-28 -- [4, 512, 14, 14] --

│ └─Conv2d: 2-29 [3, 3] [4, 512, 14, 14] 2,359,808

│ └─ReLU: 2-30 -- [4, 512, 14, 14] --

│ └─MaxPool2d: 2-31 2 [4, 512, 7, 7] --

├─AdaptiveAvgPool2d: 1-2 -- [4, 512, 7, 7] --

├─Sequential: 1-3 -- [4, 1000] --

│ └─Linear: 2-32 -- [4, 4096] 102,764,544

│ └─ReLU: 2-33 -- [4, 4096] --

│ └─Dropout: 2-34 -- [4, 4096] --

│ └─Linear: 2-35 -- [4, 4096] 16,781,312

│ └─ReLU: 2-36 -- [4, 4096] --

│ └─Dropout: 2-37 -- [4, 4096] --

│ └─Linear: 2-38 -- [4, 1000] 4,097,000

===================================================================================================================

Total params: 138,357,544

Trainable params: 138,357,544

Non-trainable params: 0

Total mult-adds (Units.GIGABYTES): 61.94

===================================================================================================================

Input size (MB): 2.41

Forward/backward pass size (MB): 433.81

Params size (MB): 553.43

Estimated Total Size (MB): 989.65

===================================================================================================================
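(#) The spatial sizes in the `Output Shape` column of the AlexNet and VGG summaries above follow the standard output size formula of convolution and pooling layers. The sketch below traces it through both feature extractors. The stride and padding values are not shown in the tables, so they are assumptions taken from the TorchVision definitions, and `OutSize` / `TraceSizes` are hypothetical helpers.

```python
def OutSize(inSize, kernelSize, stride = 1, padding = 0):
    # Output length of a convolution / pooling layer (dilation of 1, floor rounding)
    return ((inSize + 2 * padding - kernelSize) // stride) + 1

def TraceSizes(inSize, lLayers):
    # Applies the layers in order and keeps the size after each one
    lSize = [inSize]
    for kernelSize, stride, padding in lLayers:
        lSize.append(OutSize(lSize[-1], kernelSize, stride, padding))
    return lSize

# Each layer is (kernelSize, stride, padding), assumed values
lAlexNet = [(11, 4, 2), (3, 2, 0), (5, 1, 2), (3, 2, 0), (3, 1, 1), (3, 1, 1), (3, 1, 1), (3, 2, 0)]

tuConv = (3, 1, 1) #<! VGG convolution: keeps the spatial size
tuPool = (2, 2, 0) #<! VGG max pooling: halves the spatial size
lVgg16 = ([tuConv] * 2 + [tuPool]) * 2 + ([tuConv] * 3 + [tuPool]) * 3

print(TraceSizes(224, lAlexNet))   #<! [224, 55, 27, 27, 13, 13, 13, 13, 6]
print(TraceSizes(224, lVgg16)[-1]) #<! 7
```

The final sizes (6 for AlexNet, 7 for VGG16) match the `[4, 256, 6, 6]` and `[4, 512, 7, 7]` shapes which are fed to the classifier heads.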

Displaying the 03 model.

/home/vlad/miniconda3/envs/WorkshopCUDAEnv/lib/python3.11/site-packages/torchvision/models/inception.py:43: FutureWarning: The default weight initialization of inception_v3 will be changed in future releases of torchvision. If you wish to keep the old behavior (which leads to long initialization times due to scipy/scipy#11299), please set init_weights=True.

warnings.warn(

===================================================================================================================

Layer (type:depth-idx) Kernel Shape Output Shape Param #

===================================================================================================================

Inception3 -- [4, 1000] 3,326,696

├─BasicConv2d: 1-1 -- [4, 32, 111, 111] --

│ └─Conv2d: 2-1 [3, 3] [4, 32, 111, 111] 864

│ └─BatchNorm2d: 2-2 -- [4, 32, 111, 111] 64

├─BasicConv2d: 1-2 -- [4, 32, 109, 109] --

│ └─Conv2d: 2-3 [3, 3] [4, 32, 109, 109] 9,216

│ └─BatchNorm2d: 2-4 -- [4, 32, 109, 109] 64

├─BasicConv2d: 1-3 -- [4, 64, 109, 109] --

│ └─Conv2d: 2-5 [3, 3] [4, 64, 109, 109] 18,432

│ └─BatchNorm2d: 2-6 -- [4, 64, 109, 109] 128

├─MaxPool2d: 1-4 3 [4, 64, 54, 54] --

├─BasicConv2d: 1-5 -- [4, 80, 54, 54] --

│ └─Conv2d: 2-7 [1, 1] [4, 80, 54, 54] 5,120

│ └─BatchNorm2d: 2-8 -- [4, 80, 54, 54] 160

├─BasicConv2d: 1-6 -- [4, 192, 52, 52] --

│ └─Conv2d: 2-9 [3, 3] [4, 192, 52, 52] 138,240

│ └─BatchNorm2d: 2-10 -- [4, 192, 52, 52] 384

├─MaxPool2d: 1-7 3 [4, 192, 25, 25] --

├─InceptionA: 1-8 -- [4, 256, 25, 25] --

│ └─BasicConv2d: 2-11 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-1 [1, 1] [4, 64, 25, 25] 12,288

│ │ └─BatchNorm2d: 3-2 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-12 -- [4, 48, 25, 25] --

│ │ └─Conv2d: 3-3 [1, 1] [4, 48, 25, 25] 9,216

│ │ └─BatchNorm2d: 3-4 -- [4, 48, 25, 25] 96

│ └─BasicConv2d: 2-13 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-5 [5, 5] [4, 64, 25, 25] 76,800

│ │ └─BatchNorm2d: 3-6 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-14 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-7 [1, 1] [4, 64, 25, 25] 12,288

│ │ └─BatchNorm2d: 3-8 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-15 -- [4, 96, 25, 25] --

│ │ └─Conv2d: 3-9 [3, 3] [4, 96, 25, 25] 55,296

│ │ └─BatchNorm2d: 3-10 -- [4, 96, 25, 25] 192

│ └─BasicConv2d: 2-16 -- [4, 96, 25, 25] --

│ │ └─Conv2d: 3-11 [3, 3] [4, 96, 25, 25] 82,944

│ │ └─BatchNorm2d: 3-12 -- [4, 96, 25, 25] 192

│ └─BasicConv2d: 2-17 -- [4, 32, 25, 25] --

│ │ └─Conv2d: 3-13 [1, 1] [4, 32, 25, 25] 6,144

│ │ └─BatchNorm2d: 3-14 -- [4, 32, 25, 25] 64

├─InceptionA: 1-9 -- [4, 288, 25, 25] --

│ └─BasicConv2d: 2-18 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-15 [1, 1] [4, 64, 25, 25] 16,384

│ │ └─BatchNorm2d: 3-16 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-19 -- [4, 48, 25, 25] --

│ │ └─Conv2d: 3-17 [1, 1] [4, 48, 25, 25] 12,288

│ │ └─BatchNorm2d: 3-18 -- [4, 48, 25, 25] 96

│ └─BasicConv2d: 2-20 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-19 [5, 5] [4, 64, 25, 25] 76,800

│ │ └─BatchNorm2d: 3-20 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-21 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-21 [1, 1] [4, 64, 25, 25] 16,384

│ │ └─BatchNorm2d: 3-22 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-22 -- [4, 96, 25, 25] --

│ │ └─Conv2d: 3-23 [3, 3] [4, 96, 25, 25] 55,296

│ │ └─BatchNorm2d: 3-24 -- [4, 96, 25, 25] 192

│ └─BasicConv2d: 2-23 -- [4, 96, 25, 25] --

│ │ └─Conv2d: 3-25 [3, 3] [4, 96, 25, 25] 82,944

│ │ └─BatchNorm2d: 3-26 -- [4, 96, 25, 25] 192

│ └─BasicConv2d: 2-24 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-27 [1, 1] [4, 64, 25, 25] 16,384

│ │ └─BatchNorm2d: 3-28 -- [4, 64, 25, 25] 128

├─InceptionA: 1-10 -- [4, 288, 25, 25] --

│ └─BasicConv2d: 2-25 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-29 [1, 1] [4, 64, 25, 25] 18,432

│ │ └─BatchNorm2d: 3-30 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-26 -- [4, 48, 25, 25] --

│ │ └─Conv2d: 3-31 [1, 1] [4, 48, 25, 25] 13,824

│ │ └─BatchNorm2d: 3-32 -- [4, 48, 25, 25] 96

│ └─BasicConv2d: 2-27 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-33 [5, 5] [4, 64, 25, 25] 76,800

│ │ └─BatchNorm2d: 3-34 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-28 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-35 [1, 1] [4, 64, 25, 25] 18,432

│ │ └─BatchNorm2d: 3-36 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-29 -- [4, 96, 25, 25] --

│ │ └─Conv2d: 3-37 [3, 3] [4, 96, 25, 25] 55,296

│ │ └─BatchNorm2d: 3-38 -- [4, 96, 25, 25] 192

│ └─BasicConv2d: 2-30 -- [4, 96, 25, 25] --

│ │ └─Conv2d: 3-39 [3, 3] [4, 96, 25, 25] 82,944

│ │ └─BatchNorm2d: 3-40 -- [4, 96, 25, 25] 192

│ └─BasicConv2d: 2-31 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-41 [1, 1] [4, 64, 25, 25] 18,432

│ │ └─BatchNorm2d: 3-42 -- [4, 64, 25, 25] 128

├─InceptionB: 1-11 -- [4, 768, 12, 12] --

│ └─BasicConv2d: 2-32 -- [4, 384, 12, 12] --

│ │ └─Conv2d: 3-43 [3, 3] [4, 384, 12, 12] 995,328

│ │ └─BatchNorm2d: 3-44 -- [4, 384, 12, 12] 768

│ └─BasicConv2d: 2-33 -- [4, 64, 25, 25] --

│ │ └─Conv2d: 3-45 [1, 1] [4, 64, 25, 25] 18,432

│ │ └─BatchNorm2d: 3-46 -- [4, 64, 25, 25] 128

│ └─BasicConv2d: 2-34 -- [4, 96, 25, 25] --

│ │ └─Conv2d: 3-47 [3, 3] [4, 96, 25, 25] 55,296

│ │ └─BatchNorm2d: 3-48 -- [4, 96, 25, 25] 192

│ └─BasicConv2d: 2-35 -- [4, 96, 12, 12] --

│ │ └─Conv2d: 3-49 [3, 3] [4, 96, 12, 12] 82,944

│ │ └─BatchNorm2d: 3-50 -- [4, 96, 12, 12] 192

├─InceptionC: 1-12 -- [4, 768, 12, 12] --

│ └─BasicConv2d: 2-36 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-51 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-52 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-37 -- [4, 128, 12, 12] --

│ │ └─Conv2d: 3-53 [1, 1] [4, 128, 12, 12] 98,304

│ │ └─BatchNorm2d: 3-54 -- [4, 128, 12, 12] 256

│ └─BasicConv2d: 2-38 -- [4, 128, 12, 12] --

│ │ └─Conv2d: 3-55 [1, 7] [4, 128, 12, 12] 114,688

│ │ └─BatchNorm2d: 3-56 -- [4, 128, 12, 12] 256

│ └─BasicConv2d: 2-39 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-57 [7, 1] [4, 192, 12, 12] 172,032

│ │ └─BatchNorm2d: 3-58 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-40 -- [4, 128, 12, 12] --

│ │ └─Conv2d: 3-59 [1, 1] [4, 128, 12, 12] 98,304

│ │ └─BatchNorm2d: 3-60 -- [4, 128, 12, 12] 256

│ └─BasicConv2d: 2-41 -- [4, 128, 12, 12] --

│ │ └─Conv2d: 3-61 [7, 1] [4, 128, 12, 12] 114,688

│ │ └─BatchNorm2d: 3-62 -- [4, 128, 12, 12] 256

│ └─BasicConv2d: 2-42 -- [4, 128, 12, 12] --

│ │ └─Conv2d: 3-63 [1, 7] [4, 128, 12, 12] 114,688

│ │ └─BatchNorm2d: 3-64 -- [4, 128, 12, 12] 256

│ └─BasicConv2d: 2-43 -- [4, 128, 12, 12] --

│ │ └─Conv2d: 3-65 [7, 1] [4, 128, 12, 12] 114,688

│ │ └─BatchNorm2d: 3-66 -- [4, 128, 12, 12] 256

│ └─BasicConv2d: 2-44 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-67 [1, 7] [4, 192, 12, 12] 172,032

│ │ └─BatchNorm2d: 3-68 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-45 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-69 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-70 -- [4, 192, 12, 12] 384

├─InceptionC: 1-13 -- [4, 768, 12, 12] --

│ └─BasicConv2d: 2-46 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-71 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-72 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-47 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-73 [1, 1] [4, 160, 12, 12] 122,880

│ │ └─BatchNorm2d: 3-74 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-48 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-75 [1, 7] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-76 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-49 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-77 [7, 1] [4, 192, 12, 12] 215,040

│ │ └─BatchNorm2d: 3-78 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-50 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-79 [1, 1] [4, 160, 12, 12] 122,880

│ │ └─BatchNorm2d: 3-80 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-51 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-81 [7, 1] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-82 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-52 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-83 [1, 7] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-84 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-53 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-85 [7, 1] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-86 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-54 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-87 [1, 7] [4, 192, 12, 12] 215,040

│ │ └─BatchNorm2d: 3-88 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-55 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-89 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-90 -- [4, 192, 12, 12] 384

├─InceptionC: 1-14 -- [4, 768, 12, 12] --

│ └─BasicConv2d: 2-56 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-91 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-92 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-57 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-93 [1, 1] [4, 160, 12, 12] 122,880

│ │ └─BatchNorm2d: 3-94 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-58 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-95 [1, 7] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-96 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-59 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-97 [7, 1] [4, 192, 12, 12] 215,040

│ │ └─BatchNorm2d: 3-98 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-60 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-99 [1, 1] [4, 160, 12, 12] 122,880

│ │ └─BatchNorm2d: 3-100 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-61 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-101 [7, 1] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-102 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-62 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-103 [1, 7] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-104 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-63 -- [4, 160, 12, 12] --

│ │ └─Conv2d: 3-105 [7, 1] [4, 160, 12, 12] 179,200

│ │ └─BatchNorm2d: 3-106 -- [4, 160, 12, 12] 320

│ └─BasicConv2d: 2-64 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-107 [1, 7] [4, 192, 12, 12] 215,040

│ │ └─BatchNorm2d: 3-108 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-65 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-109 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-110 -- [4, 192, 12, 12] 384

├─InceptionC: 1-15 -- [4, 768, 12, 12] --

│ └─BasicConv2d: 2-66 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-111 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-112 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-67 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-113 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-114 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-68 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-115 [1, 7] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-116 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-69 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-117 [7, 1] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-118 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-70 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-119 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-120 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-71 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-121 [7, 1] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-122 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-72 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-123 [1, 7] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-124 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-73 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-125 [7, 1] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-126 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-74 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-127 [1, 7] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-128 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-75 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-129 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-130 -- [4, 192, 12, 12] 384

├─InceptionD: 1-16 -- [4, 1280, 5, 5] --

│ └─BasicConv2d: 2-76 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-131 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-132 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-77 -- [4, 320, 5, 5] --

│ │ └─Conv2d: 3-133 [3, 3] [4, 320, 5, 5] 552,960

│ │ └─BatchNorm2d: 3-134 -- [4, 320, 5, 5] 640

│ └─BasicConv2d: 2-78 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-135 [1, 1] [4, 192, 12, 12] 147,456

│ │ └─BatchNorm2d: 3-136 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-79 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-137 [1, 7] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-138 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-80 -- [4, 192, 12, 12] --

│ │ └─Conv2d: 3-139 [7, 1] [4, 192, 12, 12] 258,048

│ │ └─BatchNorm2d: 3-140 -- [4, 192, 12, 12] 384

│ └─BasicConv2d: 2-81 -- [4, 192, 5, 5] --

│ │ └─Conv2d: 3-141 [3, 3] [4, 192, 5, 5] 331,776

│ │ └─BatchNorm2d: 3-142 -- [4, 192, 5, 5] 384

├─InceptionE: 1-17 -- [4, 2048, 5, 5] --

│ └─BasicConv2d: 2-82 -- [4, 320, 5, 5] --

│ │ └─Conv2d: 3-143 [1, 1] [4, 320, 5, 5] 409,600

│ │ └─BatchNorm2d: 3-144 -- [4, 320, 5, 5] 640

│ └─BasicConv2d: 2-83 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-145 [1, 1] [4, 384, 5, 5] 491,520

│ │ └─BatchNorm2d: 3-146 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-84 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-147 [1, 3] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-148 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-85 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-149 [3, 1] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-150 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-86 -- [4, 448, 5, 5] --

│ │ └─Conv2d: 3-151 [1, 1] [4, 448, 5, 5] 573,440

│ │ └─BatchNorm2d: 3-152 -- [4, 448, 5, 5] 896

│ └─BasicConv2d: 2-87 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-153 [3, 3] [4, 384, 5, 5] 1,548,288

│ │ └─BatchNorm2d: 3-154 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-88 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-155 [1, 3] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-156 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-89 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-157 [3, 1] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-158 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-90 -- [4, 192, 5, 5] --

│ │ └─Conv2d: 3-159 [1, 1] [4, 192, 5, 5] 245,760

│ │ └─BatchNorm2d: 3-160 -- [4, 192, 5, 5] 384

├─InceptionE: 1-18 -- [4, 2048, 5, 5] --

│ └─BasicConv2d: 2-91 -- [4, 320, 5, 5] --

│ │ └─Conv2d: 3-161 [1, 1] [4, 320, 5, 5] 655,360

│ │ └─BatchNorm2d: 3-162 -- [4, 320, 5, 5] 640

│ └─BasicConv2d: 2-92 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-163 [1, 1] [4, 384, 5, 5] 786,432

│ │ └─BatchNorm2d: 3-164 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-93 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-165 [1, 3] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-166 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-94 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-167 [3, 1] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-168 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-95 -- [4, 448, 5, 5] --

│ │ └─Conv2d: 3-169 [1, 1] [4, 448, 5, 5] 917,504

│ │ └─BatchNorm2d: 3-170 -- [4, 448, 5, 5] 896

│ └─BasicConv2d: 2-96 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-171 [3, 3] [4, 384, 5, 5] 1,548,288

│ │ └─BatchNorm2d: 3-172 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-97 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-173 [1, 3] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-174 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-98 -- [4, 384, 5, 5] --

│ │ └─Conv2d: 3-175 [3, 1] [4, 384, 5, 5] 442,368

│ │ └─BatchNorm2d: 3-176 -- [4, 384, 5, 5] 768

│ └─BasicConv2d: 2-99 -- [4, 192, 5, 5] --

│ │ └─Conv2d: 3-177 [1, 1] [4, 192, 5, 5] 393,216

│ │ └─BatchNorm2d: 3-178 -- [4, 192, 5, 5] 384

├─AdaptiveAvgPool2d: 1-19 -- [4, 2048, 1, 1] --

├─Dropout: 1-20 -- [4, 2048, 1, 1] --

├─Linear: 1-21 -- [4, 1000] 2,049,000

===================================================================================================================

Total params: 27,161,264

Trainable params: 27,161,264

Non-trainable params: 0

Total mult-adds (Units.GIGABYTES): 11.35

===================================================================================================================

Input size (MB): 2.41

Forward/backward pass size (MB): 297.53

Params size (MB): 95.34

Estimated Total Size (MB): 395.27

===================================================================================================================

Displaying the 04 model.

===================================================================================================================

Layer (type:depth-idx) Kernel Shape Output Shape Param #

===================================================================================================================

ResNet -- [4, 1000] --

├─Conv2d: 1-1 [7, 7] [4, 64, 112, 112] 9,408

├─BatchNorm2d: 1-2 -- [4, 64, 112, 112] 128

├─ReLU: 1-3 -- [4, 64, 112, 112] --

├─MaxPool2d: 1-4 3 [4, 64, 56, 56] --

├─Sequential: 1-5 -- [4, 256, 56, 56] --

│ └─Bottleneck: 2-1 -- [4, 256, 56, 56] --

│ │ └─Conv2d: 3-1 [1, 1] [4, 64, 56, 56] 4,096

│ │ └─BatchNorm2d: 3-2 -- [4, 64, 56, 56] 128

│ │ └─ReLU: 3-3 -- [4, 64, 56, 56] --

│ │ └─Conv2d: 3-4 [3, 3] [4, 64, 56, 56] 36,864

│ │ └─BatchNorm2d: 3-5 -- [4, 64, 56, 56] 128

│ │ └─ReLU: 3-6 -- [4, 64, 56, 56] --

│ │ └─Conv2d: 3-7 [1, 1] [4, 256, 56, 56] 16,384

│ │ └─BatchNorm2d: 3-8 -- [4, 256, 56, 56] 512

│ │ └─Sequential: 3-9 -- [4, 256, 56, 56] 16,896

│ │ └─ReLU: 3-10 -- [4, 256, 56, 56] --

│ └─Bottleneck: 2-2 -- [4, 256, 56, 56] --

│ │ └─Conv2d: 3-11 [1, 1] [4, 64, 56, 56] 16,384

│ │ └─BatchNorm2d: 3-12 -- [4, 64, 56, 56] 128

│ │ └─ReLU: 3-13 -- [4, 64, 56, 56] --

│ │ └─Conv2d: 3-14 [3, 3] [4, 64, 56, 56] 36,864

│ │ └─BatchNorm2d: 3-15 -- [4, 64, 56, 56] 128

│ │ └─ReLU: 3-16 -- [4, 64, 56, 56] --

│ │ └─Conv2d: 3-17 [1, 1] [4, 256, 56, 56] 16,384

│ │ └─BatchNorm2d: 3-18 -- [4, 256, 56, 56] 512

│ │ └─ReLU: 3-19 -- [4, 256, 56, 56] --

│ └─Bottleneck: 2-3 -- [4, 256, 56, 56] --

│ │ └─Conv2d: 3-20 [1, 1] [4, 64, 56, 56] 16,384

│ │ └─BatchNorm2d: 3-21 -- [4, 64, 56, 56] 128

│ │ └─ReLU: 3-22 -- [4, 64, 56, 56] --

│ │ └─Conv2d: 3-23 [3, 3] [4, 64, 56, 56] 36,864

│ │ └─BatchNorm2d: 3-24 -- [4, 64, 56, 56] 128

│ │ └─ReLU: 3-25 -- [4, 64, 56, 56] --

│ │ └─Conv2d: 3-26 [1, 1] [4, 256, 56, 56] 16,384

│ │ └─BatchNorm2d: 3-27 -- [4, 256, 56, 56] 512

│ │ └─ReLU: 3-28 -- [4, 256, 56, 56] --

├─Sequential: 1-6 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-4 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-29 [1, 1] [4, 128, 56, 56] 32,768

│ │ └─BatchNorm2d: 3-30 -- [4, 128, 56, 56] 256

│ │ └─ReLU: 3-31 -- [4, 128, 56, 56] --

│ │ └─Conv2d: 3-32 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-33 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-34 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-35 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-36 -- [4, 512, 28, 28] 1,024

│ │ └─Sequential: 3-37 -- [4, 512, 28, 28] 132,096

│ │ └─ReLU: 3-38 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-5 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-39 [1, 1] [4, 128, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-40 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-41 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-42 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-43 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-44 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-45 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-46 -- [4, 512, 28, 28] 1,024

│ │ └─ReLU: 3-47 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-6 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-48 [1, 1] [4, 128, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-49 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-50 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-51 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-52 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-53 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-54 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-55 -- [4, 512, 28, 28] 1,024

│ │ └─ReLU: 3-56 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-7 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-57 [1, 1] [4, 128, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-58 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-59 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-60 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-61 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-62 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-63 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-64 -- [4, 512, 28, 28] 1,024

│ │ └─ReLU: 3-65 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-8 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-66 [1, 1] [4, 128, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-67 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-68 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-69 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-70 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-71 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-72 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-73 -- [4, 512, 28, 28] 1,024

│ │ └─ReLU: 3-74 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-9 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-75 [1, 1] [4, 128, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-76 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-77 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-78 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-79 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-80 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-81 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-82 -- [4, 512, 28, 28] 1,024

│ │ └─ReLU: 3-83 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-10 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-84 [1, 1] [4, 128, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-85 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-86 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-87 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-88 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-89 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-90 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-91 -- [4, 512, 28, 28] 1,024

│ │ └─ReLU: 3-92 -- [4, 512, 28, 28] --

│ └─Bottleneck: 2-11 -- [4, 512, 28, 28] --

│ │ └─Conv2d: 3-93 [1, 1] [4, 128, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-94 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-95 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-96 [3, 3] [4, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-97 -- [4, 128, 28, 28] 256

│ │ └─ReLU: 3-98 -- [4, 128, 28, 28] --

│ │ └─Conv2d: 3-99 [1, 1] [4, 512, 28, 28] 65,536

│ │ └─BatchNorm2d: 3-100 -- [4, 512, 28, 28] 1,024

│ │ └─ReLU: 3-101 -- [4, 512, 28, 28] --

├─Sequential: 1-7 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-12 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-102 [1, 1] [4, 256, 28, 28] 131,072

│ │ └─BatchNorm2d: 3-103 -- [4, 256, 28, 28] 512

│ │ └─ReLU: 3-104 -- [4, 256, 28, 28] --

│ │ └─Conv2d: 3-105 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-106 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-107 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-108 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-109 -- [4, 1024, 14, 14] 2,048

│ │ └─Sequential: 3-110 -- [4, 1024, 14, 14] 526,336

│ │ └─ReLU: 3-111 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-13 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-112 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-113 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-114 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-115 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-116 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-117 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-118 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-119 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-120 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-14 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-121 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-122 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-123 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-124 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-125 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-126 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-127 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-128 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-129 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-15 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-130 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-131 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-132 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-133 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-134 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-135 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-136 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-137 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-138 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-16 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-139 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-140 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-141 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-142 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-143 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-144 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-145 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-146 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-147 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-17 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-148 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-149 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-150 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-151 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-152 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-153 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-154 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-155 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-156 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-18 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-157 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-158 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-159 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-160 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-161 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-162 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-163 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-164 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-165 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-19 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-166 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-167 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-168 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-169 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-170 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-171 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-172 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-173 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-174 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-20 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-175 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-176 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-177 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-178 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-179 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-180 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-181 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-182 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-183 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-21 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-184 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-185 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-186 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-187 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-188 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-189 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-190 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-191 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-192 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-22 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-193 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-194 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-195 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-196 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-197 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-198 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-199 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-200 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-201 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-23 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-202 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-203 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-204 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-205 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-206 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-207 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-208 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-209 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-210 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-24 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-211 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-212 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-213 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-214 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-215 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-216 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-217 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-218 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-219 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-25 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-220 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-221 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-222 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-223 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-224 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-225 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-226 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-227 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-228 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-26 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-229 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-230 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-231 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-232 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-233 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-234 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-235 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-236 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-237 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-27 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-238 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-239 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-240 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-241 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-242 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-243 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-244 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-245 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-246 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-28 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-247 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-248 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-249 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-250 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-251 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-252 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-253 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-254 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-255 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-29 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-256 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-257 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-258 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-259 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-260 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-261 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-262 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-263 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-264 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-30 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-265 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-266 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-267 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-268 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-269 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-270 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-271 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-272 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-273 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-31 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-274 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-275 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-276 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-277 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-278 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-279 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-280 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-281 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-282 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-32 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-283 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-284 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-285 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-286 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-287 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-288 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-289 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-290 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-291 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-33 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-292 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-293 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-294 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-295 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-296 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-297 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-298 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-299 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-300 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-34 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-301 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-302 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-303 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-304 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-305 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-306 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-307 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-308 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-309 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-35 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-310 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-311 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-312 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-313 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-314 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-315 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-316 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-317 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-318 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-36 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-319 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-320 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-321 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-322 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-323 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-324 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-325 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-326 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-327 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-37 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-328 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-329 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-330 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-331 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-332 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-333 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-334 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-335 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-336 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-38 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-337 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-338 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-339 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-340 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-341 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-342 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-343 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-344 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-345 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-39 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-346 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-347 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-348 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-349 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-350 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-351 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-352 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-353 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-354 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-40 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-355 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-356 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-357 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-358 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-359 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-360 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-361 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-362 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-363 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-41 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-364 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-365 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-366 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-367 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-368 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-369 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-370 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-371 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-372 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-42 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-373 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-374 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-375 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-376 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-377 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-378 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-379 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-380 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-381 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-43 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-382 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-383 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-384 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-385 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-386 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-387 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-388 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-389 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-390 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-44 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-391 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-392 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-393 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-394 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-395 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-396 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-397 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-398 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-399 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-45 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-400 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-401 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-402 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-403 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-404 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-405 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-406 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-407 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-408 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-46 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-409 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-410 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-411 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-412 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-413 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-414 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-415 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-416 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-417 -- [4, 1024, 14, 14] --

│ └─Bottleneck: 2-47 -- [4, 1024, 14, 14] --

│ │ └─Conv2d: 3-418 [1, 1] [4, 256, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-419 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-420 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-421 [3, 3] [4, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-422 -- [4, 256, 14, 14] 512

│ │ └─ReLU: 3-423 -- [4, 256, 14, 14] --

│ │ └─Conv2d: 3-424 [1, 1] [4, 1024, 14, 14] 262,144

│ │ └─BatchNorm2d: 3-425 -- [4, 1024, 14, 14] 2,048

│ │ └─ReLU: 3-426 -- [4, 1024, 14, 14] --

├─Sequential: 1-8 -- [4, 2048, 7, 7] --

│ └─Bottleneck: 2-48 -- [4, 2048, 7, 7] --

│ │ └─Conv2d: 3-427 [1, 1] [4, 512, 14, 14] 524,288

│ │ └─BatchNorm2d: 3-428 -- [4, 512, 14, 14] 1,024

│ │ └─ReLU: 3-429 -- [4, 512, 14, 14] --

│ │ └─Conv2d: 3-430 [3, 3] [4, 512, 7, 7] 2,359,296

│ │ └─BatchNorm2d: 3-431 -- [4, 512, 7, 7] 1,024

│ │ └─ReLU: 3-432 -- [4, 512, 7, 7] --

│ │ └─Conv2d: 3-433 [1, 1] [4, 2048, 7, 7] 1,048,576

│ │ └─BatchNorm2d: 3-434 -- [4, 2048, 7, 7] 4,096

│ │ └─Sequential: 3-435 -- [4, 2048, 7, 7] 2,101,248

│ │ └─ReLU: 3-436 -- [4, 2048, 7, 7] --

│ └─Bottleneck: 2-49 -- [4, 2048, 7, 7] --

│ │ └─Conv2d: 3-437 [1, 1] [4, 512, 7, 7] 1,048,576

│ │ └─BatchNorm2d: 3-438 -- [4, 512, 7, 7] 1,024

│ │ └─ReLU: 3-439 -- [4, 512, 7, 7] --

│ │ └─Conv2d: 3-440 [3, 3] [4, 512, 7, 7] 2,359,296

│ │ └─BatchNorm2d: 3-441 -- [4, 512, 7, 7] 1,024

│ │ └─ReLU: 3-442 -- [4, 512, 7, 7] --

│ │ └─Conv2d: 3-443 [1, 1] [4, 2048, 7, 7] 1,048,576

│ │ └─BatchNorm2d: 3-444 -- [4, 2048, 7, 7] 4,096

│ │ └─ReLU: 3-445 -- [4, 2048, 7, 7] --

│ └─Bottleneck: 2-50 -- [4, 2048, 7, 7] --

│ │ └─Conv2d: 3-446 [1, 1] [4, 512, 7, 7] 1,048,576

│ │ └─BatchNorm2d: 3-447 -- [4, 512, 7, 7] 1,024

│ │ └─ReLU: 3-448 -- [4, 512, 7, 7] --

│ │ └─Conv2d: 3-449 [3, 3] [4, 512, 7, 7] 2,359,296

│ │ └─BatchNorm2d: 3-450 -- [4, 512, 7, 7] 1,024

│ │ └─ReLU: 3-451 -- [4, 512, 7, 7] --

│ │ └─Conv2d: 3-452 [1, 1] [4, 2048, 7, 7] 1,048,576

│ │ └─BatchNorm2d: 3-453 -- [4, 2048, 7, 7] 4,096

│ │ └─ReLU: 3-454 -- [4, 2048, 7, 7] --

├─AdaptiveAvgPool2d: 1-9 -- [4, 2048, 1, 1] --

├─Linear: 1-10 -- [4, 1000] 2,049,000

===================================================================================================================

Total params: 60,192,808

Trainable params: 60,192,808

Non-trainable params: 0

Total mult-adds (Units.GIGABYTES): 46.06

===================================================================================================================

Input size (MB): 2.41

Forward/backward pass size (MB): 1443.50

Params size (MB): 240.77

Estimated Total Size (MB): 1686.67

===================================================================================================================
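
(#) The counts in the summary above can be verified by hand. The following is a minimal sketch, assuming bias free convolutions (as the counts suggest), 2 learnable parameters per Batch Norm channel and 4 bytes per `float32` value. The helper names are illustrative and are not part of `torchinfo`.

```python
def conv2d_params(c_in, c_out, k, bias=False):
    """Number of weights of a k x k convolution, plus an optional bias."""
    return k * k * c_in * c_out + (c_out if bias else 0)

def batchnorm_params(c):
    """Learnable scale and shift per channel."""
    return 2 * c

def linear_params(n_in, n_out):
    """Weight matrix plus bias vector."""
    return n_in * n_out + n_out

print(conv2d_params(512, 128, 1))                          # 65536: 1x1 Conv2d, 512 -> 128
print(conv2d_params(128, 128, 3))                          # 147456: 3x3 Conv2d, 128 -> 128
print(conv2d_params(256, 512, 1) + batchnorm_params(512))  # 132096: shortcut Sequential (Conv2d + BatchNorm2d)
print(linear_params(2048, 1000))                           # 2049000: final Linear layer

total_params = 60_192_808                                  # "Total params" from the summary
print(round(total_params * 4 / 1e6, 2))                    # 240.77: "Params size (MB)"
print(round(4 * 3 * 224 * 224 * 4 / 1e6, 2))               # 2.41: "Input size (MB)" for a batch of 4
```

The bottleneck design shows in the numbers: the `3 x 3` convolution runs on the reduced channel count, so most parameters of a block sit in the `1 x 1` expansions and the single `3 x 3` layer rather than in a wide `3 x 3` layer.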

(!) Compare the AlexNet structure to the one on slides.

(@) Add a graph per model using TensorBoard.

Build the Model Pre Processing#

The models were trained on ImageNet with an input size of 3 x 224 x 224.

One way to deal with images of different dimensions is as follows:

Resize the smallest dimension of the image to 224.

Crop a square of 224 x 224 at the center.

This section implements such pre processing.
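
(#) The geometry of the 2 steps above can be sketched in plain Python. This is an illustration only: the function name is hypothetical, and the rounding (floor) is an assumption which may differ by a pixel from the library for some sizes.

```python
def resize_then_center_crop(height, width, size=224):
    """Return the resized (height, width) and the (top, left) offset of the crop."""
    short, long = min(height, width), max(height, width)
    new_long = (size * long) // short  # Keep the aspect ratio (floored)
    if height <= width:
        new_h, new_w = size, new_long
    else:
        new_h, new_w = new_long, size
    top = (new_h - size) // 2          # Rows removed above the crop
    left = (new_w - size) // 2         # Columns removed left of the crop
    return (new_h, new_w), (top, left)

# A 200 x 300 image: the short side becomes 224, the long side 336
print(resize_then_center_crop(200, 300))  # ((224, 336), (0, 56))
```

For the `200 x 300` case, 56 columns are discarded on each side, so anything near the left or right border never reaches the model.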

(#) One could use PyTorch’s transform functionality as a pre process function.

a = (224 / 200) * 300 #<! Long side of a 200 x 300 image after resizing the short side to 224

# add center crop

336.00000000000006

# Pre Process Image as Transform

vMean = np.array([0.485, 0.456, 0.406])

vStd = np.array([0.229, 0.224, 0.225])

oPreProcess = TorchVisionTrns.Compose([

TorchVisionTrns.ToImage(),

TorchVisionTrns.ToDtype(torch.float32, scale = True),

TorchVisionTrns.Resize(imgSize),

TorchVisionTrns.CenterCrop(imgSize), #<! Keeps only the center: Fits objects at the center of the image, may cut off others

TorchVisionTrns.Normalize(mean = vMean, std = vStd), #<! Values from the model documentation (Per model)

])

(#) Instead of a center crop, one can pad the image to a square, which keeps the whole image content.
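
(#) A minimal sketch of the padding alternative, computing how many pixels to add on each side so the image becomes square before it is resized. The function name is hypothetical. The `(left, top, right, bottom)` order is chosen to match the 4 element padding accepted by the `Pad` transform of `torchvision`.

```python
def square_padding(height, width):
    """Return (left, top, right, bottom) padding which makes the image square."""
    side = max(height, width)
    pad_w = side - width               # Total padding along the width
    pad_h = side - height              # Total padding along the height
    left, top = pad_w // 2, pad_h // 2
    # Any odd remainder goes to the right / bottom
    return (left, top, pad_w - left, pad_h - top)

print(square_padding(200, 300))  # (0, 50, 0, 50)
```

The trade off is that the padded borders carry no information and the object occupies fewer pixels after resizing to `224 x 224`.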

(?) Explain the transform for images with dimensions: 200x300, 250x250, 450x400?

(#) By training, most models are biased to classify mainly by data on the center of the image.

# Plot the Data

# Plot with the pre process

hF, vHa = plt.subplots(nrows = 1, ncols = numImg, figsize = (3 * numImg, 6))

for ii, hA in enumerate(vHa.flat):

mI = ski.io.imread(L_IMG_URL[ii])

tI = oPreProcess(mI)

mI = tI.numpy()

mI = mI * vStd[:, None, None] + vMean[:, None, None]

mI = np.transpose(mI, (1, 2, 0))

hA.imshow(mI)

hA.tick_params(axis = 'both', left = False, top = False, right = False, bottom = False,

labelleft = False, labeltop = False, labelright = False, labelbottom = False)

hA.grid(False)

Clipping input data to the valid range for imshow with RGB data ([0..1] for floats or [0..255] for integers).


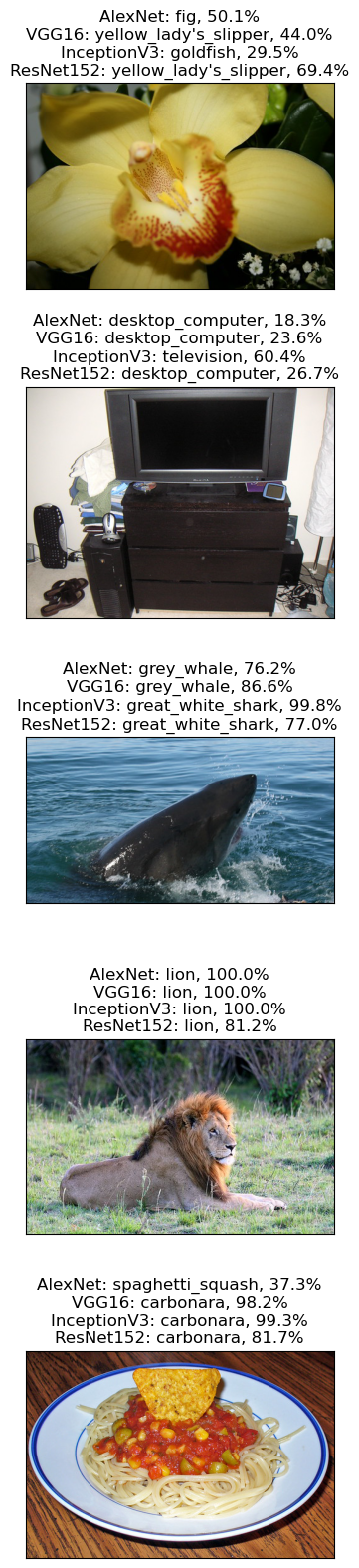

Inference by the Models#

This section uses the models to infer the class of each image.

The models are loaded with pre trained weights.

# Inference

hF, vHa = plt.subplots(nrows = numImg, ncols = 1, figsize = (4, 4 * numImg))

for ii, hA in enumerate(vHa.flat):

mI = ski.io.imread(L_IMG_URL[ii])

tI = oPreProcess(mI)

tI = torch.unsqueeze(tI, 0)

titleStr = ''

for jj, (modelName, modelClass, modelWeights) in enumerate(lModels):

oModel = modelClass(weights = modelWeights) #<! Build the model and load the pre trained weights

oModel = oModel.eval() #<! Batch Norm / Dropout Layers

oModel = oModel.to('cpu') #<! Inference on the CPU

with torch.inference_mode():

vYHat = torch.squeeze(oModel(tI))

vProb = torch.softmax(vYHat, dim = 0) #<! Probabilities

clsIdx = torch.argmax(vYHat)

clsProb = vProb[clsIdx] #<! Probability of the class

titleStr += f'{modelName}: {lClasses[clsIdx]}, {clsProb:0.1%}'

if ((jj + 1) < len(lModels)):

titleStr += '\n'

hA.imshow(mI)

hA.tick_params(axis = 'both', left = False, top = False, right = False, bottom = False,

labelleft = False, labeltop = False, labelright = False, labelbottom = False)

hA.set_title(titleStr)

hA.grid(False)

Downloading: "https://download.pytorch.org/models/alexnet-owt-7be5be79.pth" to /home/vlad/.cache/torch/hub/checkpoints/alexnet-owt-7be5be79.pth

100.0%

Downloading: "https://download.pytorch.org/models/vgg16-397923af.pth" to /home/vlad/.cache/torch/hub/checkpoints/vgg16-397923af.pth

100.0%

Downloading: "https://download.pytorch.org/models/inception_v3_google-0cc3c7bd.pth" to /home/vlad/.cache/torch/hub/checkpoints/inception_v3_google-0cc3c7bd.pth

100.0%

Downloading: "https://download.pytorch.org/models/resnet152-f82ba261.pth" to /home/vlad/.cache/torch/hub/checkpoints/resnet152-f82ba261.pth

100.0%
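
(#) The inference loop above reports only the class with the largest logit and its SoftMax value. The following sketch, in plain Python with made up logits (not the output of any of the models), shows how the SoftMax values are computed and how to list the top `k` classes. The function names are illustrative.

```python
import math

def softmax(logits):
    """Numerically stable SoftMax over a list of logits."""
    max_logit = max(logits)
    exps = [math.exp(v - max_logit) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(probs, k):
    """Return the k (index, probability) pairs with the highest probability."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(i, probs[i]) for i in order[:k]]

logits = [2.0, 1.0, 0.1, -1.0]  # Made up logits for 4 classes
probs = softmax(logits)

for idx, p in top_k(probs, 3):
    print(f'class {idx}: {p:0.1%}')
```

Since the values always sum to 1 over the known classes, an image of an object outside those classes still receives a label with a seemingly meaningful score.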

(?) Models are trained for images of size 224x224. What will happen if we use a pre trained model on images of 230x230? What about 500x500 or 64x64?

(?) What’s the meaning of the probability? Is it accurate?

(#) The original GoogLeNet has auxiliary logits which match torchvision.models.googlenet(aux_logits = True). See GoogLeNet Diagram.