Boston House Kernel Regression#

Notebook by:

Royi Avital RoyiAvital@fixelalgorithms.com

Revision History#

| Version | Date | User | Content / Changes |
|---|---|---|---|
| 1.0.000 | 07/04/2024 | Royi Avital | First version |

# Import Packages

# General Tools

import numpy as np

import scipy as sp

import pandas as pd

# Machine Learning

from sklearn.base import BaseEstimator, RegressorMixin

from sklearn.datasets import fetch_openml

from sklearn.metrics import r2_score

from sklearn.model_selection import KFold

from sklearn.model_selection import cross_val_predict

from sklearn.preprocessing import StandardScaler

# Miscellaneous

import math

import os

from platform import python_version

import random

import timeit

# Typing

from typing import Callable, Dict, List, Optional, Self, Set, Tuple, Union

# Visualization

import matplotlib as mpl

import matplotlib.pyplot as plt

import seaborn as sns

# Jupyter

from IPython import get_ipython

from IPython.display import Image

from IPython.display import display

from ipywidgets import Dropdown, FloatSlider, interact, IntSlider, Layout, SelectionSlider

Notations#

(?) Question to answer interactively.

(!) Simple task to add code for the notebook.

(@) Optional / Extra self practice.

(#) Note / Useful resource / Food for thought.

Code Notations:

someVar = 2; #<! Notation for a variable

vVector = np.random.rand(4) #<! Notation for 1D array

mMatrix = np.random.rand(4, 3) #<! Notation for 2D array

tTensor = np.random.rand(4, 3, 2, 3) #<! Notation for nD array (Tensor)

tuTuple = (1, 2, 3) #<! Notation for a tuple

lList = [1, 2, 3] #<! Notation for a list

dDict = {1: 3, 2: 2, 3: 1} #<! Notation for a dictionary

oObj = MyClass() #<! Notation for an object

dfData = pd.DataFrame() #<! Notation for a data frame

dsData = pd.Series() #<! Notation for a series

hObj = plt.Axes() #<! Notation for an object / handler / function handler

Code Exercise#

Single line fill

valToFill = ???

Multi Line to Fill (At least one)

# You need to start writing

????

Section to Fill

#===========================Fill This===========================#

# 1. Explanation about what to do.

# !! Remarks to follow / take under consideration.

mX = ???

???

#===============================================================#

# Configuration

# %matplotlib inline

seedNum = 512

np.random.seed(seedNum)

random.seed(seedNum)

# Matplotlib default color palette

lMatPltLibclr = ['#1f77b4', '#ff7f0e', '#2ca02c', '#d62728', '#9467bd', '#8c564b', '#e377c2', '#7f7f7f', '#bcbd22', '#17becf']

# sns.set_theme() #>! Apply SeaBorn theme

runInGoogleColab = 'google.colab' in str(get_ipython())

# Constants

FIG_SIZE_DEF = (8, 8)

ELM_SIZE_DEF = 50

CLASS_COLOR = ('b', 'r')

EDGE_COLOR = 'k'

MARKER_SIZE_DEF = 10

LINE_WIDTH_DEF = 2

# Courses Packages

import sys

sys.path.append('../')

sys.path.append('../../')

sys.path.append('../../../')

from utils.DataVisualization import PlotRegressionResults

# General Auxiliary Functions

def CosineKernel( vU: np.ndarray ) -> np.ndarray:
    # Raised cosine profile with support |u| < 1
    return (np.abs(vU) < 1) * (1 + np.cos(np.pi * vU))

def GaussianKernel( vU: np.ndarray ) -> np.ndarray:
    # Gaussian profile with infinite support
    return np.exp(-0.5 * np.square(vU))

def TriangularKernel( vU: np.ndarray ) -> np.ndarray:
    # Triangular profile with support |u| < 1
    return (np.abs(vU) < 1) * (1 - np.abs(vU))

def UniformKernel( vU: np.ndarray ) -> np.ndarray:
    # Box profile with support |u| < 0.5
    return 1 * (np.abs(vU) < 0.5)

Kernel Regression#

In this exercise we’ll build an estimator with the Sci Kit Learn API.

It will be based on the concept of Kernel Regression.

(#) The Kernel Regression is a non parametric method. The optimization is about its hyper parameters.

We’ll use the Boston House Prices Dataset (See also Kaggle - Boston House Prices).

It has 13 features and one target. 2 of the features are categorical features.

The objective is to estimate the `MEDV` target by optimizing the following hyper parameters:

The type of the kernel.

The `h` parameter (Bandwidth).

In this exercise we’ll do the following:

Load the Boston House Prices Dataset using `fetch_openml()`.

Create an estimator (Regressor) class using the SciKit Learn API:

Implement the constructor.

Implement the `fit()`, `predict()` and `score()` methods.

Optimize the hyper parameters using Leave One Out cross validation.

Display the output of the model.

We should get an R2 score above 0.75.

(#) In order to set the `h` parameter, one should have a look at the distance matrix of the data to get the relevant dynamic range of values.
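The note above can be sketched as follows. This is a minimal illustration on synthetic data (the array `mXDemo`, its size and the chosen percentiles are assumptions, not part of the exercise): it standardizes the features, computes all pairwise Euclidean distances and prints a few percentiles, which hint at a reasonable search range for `h`.

```python
import numpy as np
from scipy.spatial.distance import pdist
from sklearn.preprocessing import StandardScaler

# Synthetic stand in for the features (Assumption: 200 samples, 11 features with different scales)
oRng   = np.random.default_rng(0)
mXDemo = oRng.normal(size = (200, 11)) * oRng.uniform(0.5, 20.0, size = 11)

mXDemo = StandardScaler().fit_transform(mXDemo) #<! Zero mean, unit standard deviation per feature
vD     = pdist(mXDemo, metric = 'euclidean')    #<! Condensed vector of all pairwise distances

# Percentiles of the pairwise distances give the dynamic range relevant for `h`
vPct = np.percentile(vD, [1, 25, 50, 75, 99])
print(vPct)
```

For compact support kernels, a bandwidth well below the smallest typical distance leaves most query points without any reference point inside the kernel support.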

# Parameters

lKernelType = ['Cosine', 'Gaussian', 'Triangular', 'Uniform']

#===========================Fill This===========================#

# 1. Set the range of values of `h` (Bandwidth).

lH = list(np.linspace(0.1, 5, 40))

#===============================================================#

lKernels = [('Cosine', CosineKernel), ('Gaussian', GaussianKernel), ('Triangular', TriangularKernel), ('Uniform', UniformKernel)]

# Data Visualization

gridNoiseStd = 0.05

numGridPts = 250

Generate / Load Data#

Loading the Boston House Prices Dataset (See also Kaggle - Boston House Prices).

The data has 13 features and one target. 2 of the features are categorical features.

# Failing SSL Certificate ('[SSL: CERTIFICATE_VERIFY_FAILED]')

# In case `fetch_openml()` fails with SSL Certificate issue, run this.

# import ssl

# ssl._create_default_https_context = ssl._create_unverified_context

# Load Data

dfX, dsY = fetch_openml('boston', version = 1, return_X_y = True, as_frame = True, parser = 'auto')

print(f'The features data shape: {dfX.shape}')

print(f'The labels data shape: {dsY.shape}')

The features data shape: (506, 13)

The labels data shape: (506,)

# The Features Data

dfX.head(10)

| | CRIM | ZN | INDUS | CHAS | NOX | RM | AGE | DIS | RAD | TAX | PTRATIO | B | LSTAT |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0.00632 | 18.0 | 2.31 | 0 | 0.538 | 6.575 | 65.2 | 4.0900 | 1 | 296.0 | 15.3 | 396.90 | 4.98 |

| 1 | 0.02731 | 0.0 | 7.07 | 0 | 0.469 | 6.421 | 78.9 | 4.9671 | 2 | 242.0 | 17.8 | 396.90 | 9.14 |

| 2 | 0.02729 | 0.0 | 7.07 | 0 | 0.469 | 7.185 | 61.1 | 4.9671 | 2 | 242.0 | 17.8 | 392.83 | 4.03 |

| 3 | 0.03237 | 0.0 | 2.18 | 0 | 0.458 | 6.998 | 45.8 | 6.0622 | 3 | 222.0 | 18.7 | 394.63 | 2.94 |

| 4 | 0.06905 | 0.0 | 2.18 | 0 | 0.458 | 7.147 | 54.2 | 6.0622 | 3 | 222.0 | 18.7 | 396.90 | 5.33 |

| 5 | 0.02985 | 0.0 | 2.18 | 0 | 0.458 | 6.430 | 58.7 | 6.0622 | 3 | 222.0 | 18.7 | 394.12 | 5.21 |

| 6 | 0.08829 | 12.5 | 7.87 | 0 | 0.524 | 6.012 | 66.6 | 5.5605 | 5 | 311.0 | 15.2 | 395.60 | 12.43 |

| 7 | 0.14455 | 12.5 | 7.87 | 0 | 0.524 | 6.172 | 96.1 | 5.9505 | 5 | 311.0 | 15.2 | 396.90 | 19.15 |

| 8 | 0.21124 | 12.5 | 7.87 | 0 | 0.524 | 5.631 | 100.0 | 6.0821 | 5 | 311.0 | 15.2 | 386.63 | 29.93 |

| 9 | 0.17004 | 12.5 | 7.87 | 0 | 0.524 | 6.004 | 85.9 | 6.5921 | 5 | 311.0 | 15.2 | 386.71 | 17.10 |

# The Labels Data

dsY.head(10)

0 24.0

1 21.6

2 34.7

3 33.4

4 36.2

5 28.7

6 22.9

7 27.1

8 16.5

9 18.9

Name: MEDV, dtype: float64

# Info on the Data

dfX.info()

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 506 entries, 0 to 505

Data columns (total 13 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 CRIM 506 non-null float64

1 ZN 506 non-null float64

2 INDUS 506 non-null float64

3 CHAS 506 non-null category

4 NOX 506 non-null float64

5 RM 506 non-null float64

6 AGE 506 non-null float64

7 DIS 506 non-null float64

8 RAD 506 non-null category

9 TAX 506 non-null float64

10 PTRATIO 506 non-null float64

11 B 506 non-null float64

12 LSTAT 506 non-null float64

dtypes: category(2), float64(11)

memory usage: 45.1 KB

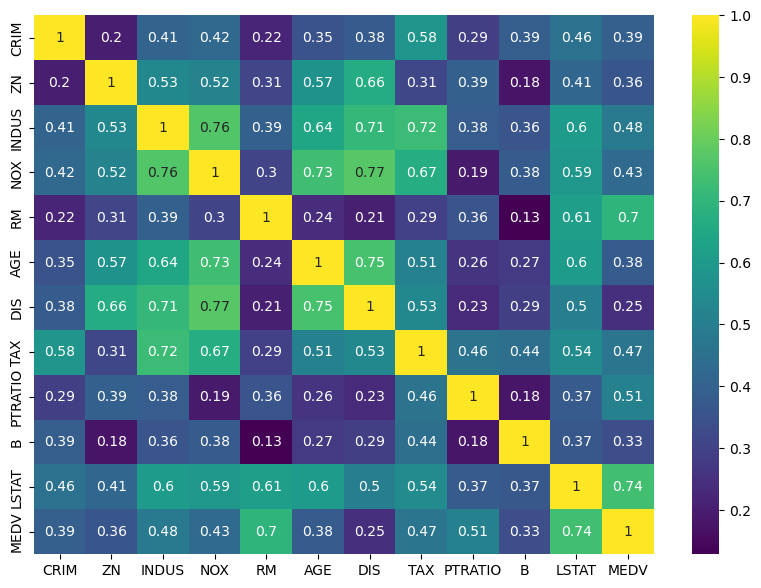

Plot Data#

# Plot the Data

# We'll display the correlation matrix of the data.

# We'll add the target variable as the last variable (`MEDV`).

dfData = pd.concat([dfX, dsY], axis = 1)

hF, hA = plt.subplots(figsize = (10, 7))

sns.heatmap(dfData.corr(numeric_only = True).abs(), annot = True, cmap = 'viridis', ax = hA)

plt.show()

(?) Would you use the above to drop some features?

(?) Do we see all features above? How should we handle those missing?

Training / Test Data#

# The Training Data

#===========================Fill This===========================#

# 1. Convert the `dfX` data frame into a matrix `mX`. Drop the categorical columns.

# 2. Convert the `dsY` series into a vector `vY`.

# !! You may find the `to_numpy()` method useful.

mX = dfX.drop(columns = ['CHAS', 'RAD']).to_numpy() #<! Drop the categorical data

vY = dsY.to_numpy()

#===============================================================#

print(f'type of dsY: {type(dsY)}')

print(f'type of vY: {type(vY)}')

print(f'The features data shape: {mX.shape}')

print(f'The labels data shape: {vY.shape}')

type of dsY: <class 'pandas.core.series.Series'>

type of vY: <class 'numpy.ndarray'>

The features data shape: (506, 11)

The labels data shape: (506,)

(#) We dropped the `CHAS` feature which is binary. Binary features are like One Hot Encoding, so we could keep it.

(?) Why are binary features good as input while multi value categorical are not? Think about metrics.

# Standardizing the Data

# Since we use a single `h` for all features, it makes sense to bring the features to a common dynamic range of values.

# In this case we'll center the data and normalize to have a unit standard deviation.

#===========================Fill This===========================#

# 1. Construct the scaler using `StandardScaler` class.

# 2. Apply the scaler on the data.

oStdScaler = StandardScaler()

mX = oStdScaler.fit_transform(mX)

#===============================================================#
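A small sketch of what the standardization does, using a hypothetical two column matrix `mA` (not part of the data set): after `StandardScaler`, each column has zero mean and unit standard deviation, so a single bandwidth `h` treats both features on the same scale.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical data: two features with very different scales
mA = np.array([[1.0, 100.0], [2.0, 300.0], [3.0, 500.0]])

mAStd = StandardScaler().fit_transform(mA) #<! Center and scale each column

print(mAStd.mean(axis = 0)) #<! Per column mean
print(mAStd.std(axis = 0))  #<! Per column standard deviation
```

Without this step, the Euclidean distance would be dominated by the feature with the largest scale.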

Kernel Regressor#

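The kernel regression operation is defined by:

\[ \hat{f} \left( x \right) = \frac{ \sum_{i = 1}^{N} {w}_{x} \left( {x}_{i} \right) {y}_{i} }{ \sum_{i = 1}^{N} {w}_{x} \left( {x}_{i} \right) } \]

Where \({w}_{x} \left( {x}_{i} \right) = k \left( \frac{ x - x_{i} }{ h } \right)\).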
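A minimal numeric sketch of kernel regression as a weighted average of the reference values, with the weights \({w}_{x} \left( {x}_{i} \right)\) as defined above. The helper `GaussianProfile()` and the three reference samples are assumptions made for illustration; the helper has the same form as the `GaussianKernel()` function of the notebook.

```python
import numpy as np

def GaussianProfile( vU: np.ndarray ) -> np.ndarray:
    # Hypothetical helper, same form as `GaussianKernel()`
    return np.exp(-0.5 * np.square(vU))

vXRef = np.array([0.0, 1.0, 2.0]) #<! Reference samples x_i
vYRef = np.array([1.0, 3.0, 2.0]) #<! Reference values y_i
x     = 1.0                       #<! Query point
h     = 0.5                       #<! Bandwidth

vW    = GaussianProfile((x - vXRef) / h)     #<! Weights w_x(x_i)
yPred = np.sum(vW * vYRef) / np.sum(vW)      #<! Weighted average of the reference values
print(yPred)
```

Since the weights are non negative, the prediction always lies between the smallest and largest reference values. A smaller `h` pulls it towards the value of the nearest sample.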

In this exercise we’ll use Leave One Out validation policy with the cross_val_predict() function.
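A short sketch of the Leave One Out policy with `cross_val_predict()`. It uses synthetic data and `LinearRegression` as a stand in estimator (both are assumptions for illustration) and shows that `KFold` with the number of splits equal to the number of samples gives the same predictions as `LeaveOneOut`.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, LeaveOneOut, cross_val_predict

# Synthetic regression data (Assumption: 30 samples, 2 features)
oRng   = np.random.default_rng(1)
mXDemo = oRng.normal(size = (30, 2))
vYDemo = mXDemo @ np.array([1.5, -2.0]) + 0.1 * oRng.normal(size = 30)

oReg = LinearRegression() #<! Stand in estimator, any regressor with `fit()` / `predict()` works

# Each sample is predicted by a model trained on all other samples
vYPredLoo   = cross_val_predict(oReg, mXDemo, vYDemo, cv = LeaveOneOut())
vYPredKFold = cross_val_predict(oReg, mXDemo, vYDemo, cv = KFold(n_splits = mXDemo.shape[0]))

print(np.allclose(vYPredLoo, vYPredKFold))
```

Note that Leave One Out fits one model per sample, so the run time grows with the number of samples.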

Kernel Regression Estimator#

The kernel regression estimator is simple to implement.

Hence it is a good opportunity to exercise the creation of a SciKit Learn Estimator.

We need to provide 4 main methods:

The `__init__()` Method: The constructor of the object. It should set the kernel type and parameter `h`.

The `fit()` Method: The pre processing phase. It keeps a copy of the fit data. It should set the `h` parameter if not set.

The `predict()` Method: Prediction of the values.

The `score()` Method: Calculates the R2 score.

(#) Make sure you read and understand the `ApplyKernelRegression()` function below.

# Apply Kernel Regression

# Applies the regression given a callable kernel.

# It avoids division by 0 in case no reference points are given within the kernel domain.

def ApplyKernelRegression( hKernel: Callable[[np.ndarray], np.ndarray], paramH: float, mG: np.ndarray, vY: np.ndarray, mX: np.ndarray, metricType: str = 'euclidean', zeroThr: float = 1e-9 ) -> np.ndarray:

    mD = sp.spatial.distance.cdist(mX, mG, metric = metricType) #<! Computes the distance between each query point (`mX`) and each reference point (`mG`)
    mW = hKernel(mD / paramH) #<! Kernel weights
    vK = mW @ vY #<! Weighted sum of the reference values
    vW = np.sum(mW, axis = 1) #<! Sum of the weights per query point
    vI = np.abs(vW) < zeroThr #<! Query points with (almost) no reference points within the kernel support
    vK[vI] = 0 #<! Such points are predicted as 0
    vW[vI] = 1 #<! Remove cases of dividing by 0
    vYPred = vK / vW

    return vYPred

# The Kernel Regressor Class

class KernelRegressor(RegressorMixin, BaseEstimator):

    def __init__(self, kernelType: str = 'Gaussian', paramH: Optional[float] = None, metricType: str = 'euclidean', lKernels: List = lKernels):

        #===========================Fill This===========================#
        # 1. Add `kernelType` as an attribute of the object.
        # 2. Define the kernel from `lKernels` as `self.hKernel`.
        # 3. Add `paramH` as an attribute of the object.
        # !! Verify the input string of the kernel is within `lKernels`.
        self.kernelType = kernelType
        hKernel = None
        for tKernel in lKernels:
            if tKernel[0] == kernelType:
                hKernel = tKernel[1]
                break
        if hKernel is not None:
            self.hKernel = hKernel
        else:
            raise ValueError(f'The kernel in kernelType = {kernelType} is not in lKernels.')
        self.paramH = paramH
        #===============================================================#

        # We must set all input parameters as attributes
        self.metricType = metricType
        self.lKernels = lKernels

    def fit(self, mX: np.ndarray, vY: np.ndarray) -> Self:

        if np.ndim(mX) != 2:
            raise ValueError(f'The input `mX` must be an array like of size (n_samples, n_features) !')
        if mX.shape[0] != vY.shape[0]:
            raise ValueError(f'The input `mX` must be an array like of size (n_samples, n_features) and `vY` must be (n_samples) !')

        #===========================Fill This===========================#
        # 1. Extract the number of samples.
        # 2. Set the bandwidth using Silverman's rule of thumb if it is not set (`None`).
        # 3. Keep a copy of `mX` as a reference grid of features `mG`.
        # 4. Keep a copy of `vY` as a reference values.
        numSamples = mX.shape[0]
        if self.paramH is None:
            # Using Silverman's rule of thumb.
            # It is optimized for Density Estimation for Univariate Gaussian like data.
            σ = np.sqrt(np.sum(np.square(mX - np.mean(mX, axis = 0)))) #<! Root of the summed (Not averaged) squared deviations
            self.paramH = 1.06 * σ * (numSamples ** (-0.2))
        self.mG = mX.copy() #<! Copy!
        self.vY = vY.copy() #<! Copy!
        #===============================================================#

        return self

    def predict(self, mX: np.ndarray) -> np.ndarray:

        if np.ndim(mX) != 2:
            raise ValueError(f'The input `mX` must be an array like of size (n_samples, n_features) !')
        if mX.shape[1] != self.mG.shape[1]:
            raise ValueError(f'The input `mX` must be an array like of size (n_samples, n_features) where `n_features` matches the number of feature in `fit()` !')

        return ApplyKernelRegression(self.hKernel, self.paramH, self.mG, self.vY, mX, self.metricType)

    def score(self, mX: np.ndarray, vY: np.ndarray) -> float:
        # Return the R2 as the score

        if (np.size(vY) != np.size(mX, axis = 0)):
            raise ValueError(f'The number of samples in `mX` must match the number of labels in `vY`.')

        #===========================Fill This===========================#
        # 1. Apply the prediction on the input features.
        # 2. Calculate the R2 score (You may use `r2_score()`).
        vYPred = self.predict(mX)
        valR2 = r2_score(vY, vYPred)
        #===============================================================#

        return valR2

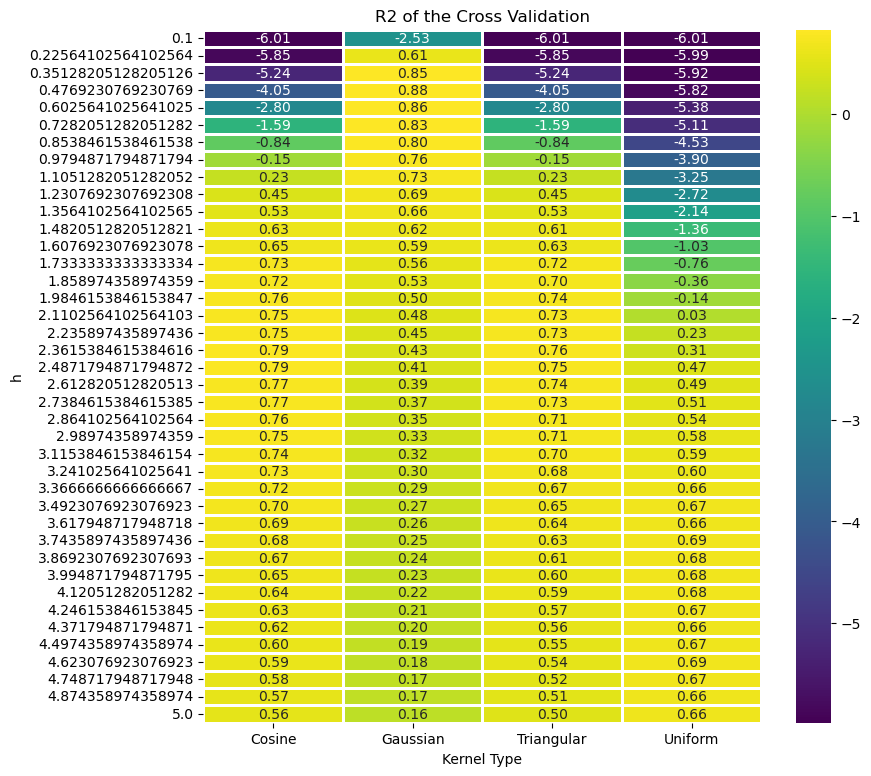

Train a Model and Optimize Hyper Parameters#

In this section we’ll optimize the model according to the R2 score.

We’ll use the r2_score() function to calculate the score.

The process to optimize the Hyper Parameters will be as follows:

Build a data frame to keep the scoring of the different hyper parameters combination.

Optimize the model:

Construct a model using the current combination of hyper parameters.

Apply a cross validation process to predict the data using `cross_val_predict()`.

As the cross validation iterator (The `cv` parameter) use `KFold` to implement Leave One Out policy.

Calculate the score of the predicted values.

Store the result in the performance data frame.

(?) While the `R2` score is used to optimize the Hyper Parameter, what loss is used to optimize the model?

# Creating the Data Frame

#===========================Fill This===========================#

# 1. Calculate the number of combinations.

# 2. Create a nested loop to create the combinations between the parameters.

# 3. Store the combinations as the columns of a data frame.

# For Advanced Python users: Use iteration tools (`itertools.product()`) to create the cartesian product.

numComb = len(lKernelType) * len(lH)

dData = {'Kernel Type': [], 'h': [], 'R2': [0.0] * numComb}

for ii, kernelType in enumerate(lKernelType):
    for jj, paramH in enumerate(lH):
        dData['Kernel Type'].append(kernelType)
        dData['h'].append(paramH)

#===============================================================#

dfModelScore = pd.DataFrame(data = dData)

dfModelScore

| | Kernel Type | h | R2 |

|---|---|---|---|

| 0 | Cosine | 0.100000 | 0.0 |

| 1 | Cosine | 0.225641 | 0.0 |

| 2 | Cosine | 0.351282 | 0.0 |

| 3 | Cosine | 0.476923 | 0.0 |

| 4 | Cosine | 0.602564 | 0.0 |

| ... | ... | ... | ... |

| 155 | Uniform | 4.497436 | 0.0 |

| 156 | Uniform | 4.623077 | 0.0 |

| 157 | Uniform | 4.748718 | 0.0 |

| 158 | Uniform | 4.874359 | 0.0 |

| 159 | Uniform | 5.000000 | 0.0 |

160 rows × 3 columns
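The nested loop can also be replaced by a cartesian product, as hinted in the cell's comment. The following is a sketch under the assumption that the lists match the ones defined in the parameters cell (they are redefined here under the hypothetical names `lKernelTypeDemo` and `lHDemo` to keep the example self contained).

```python
import itertools

import numpy as np
import pandas as pd

# Same values as `lKernelType` and `lH` (Redefined for a self contained example)
lKernelTypeDemo = ['Cosine', 'Gaussian', 'Triangular', 'Uniform']
lHDemo          = list(np.linspace(0.1, 5, 40))

# Cartesian product: The kernel type varies slowest, `h` varies fastest
lComb = list(itertools.product(lKernelTypeDemo, lHDemo))

dfDemo       = pd.DataFrame(lComb, columns = ['Kernel Type', 'h'])
dfDemo['R2'] = 0.0 #<! Placeholder for the score

print(dfDemo.shape)
```

The row order matches the nested loop, so the optimization loop can iterate over the rows in the same way.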

# Optimize the Model

#===========================Fill This===========================#

# 1. Iterate over each row of the data frame `dfModelScore`. Each row defines the hyper parameters.

# 2. Construct the model.

# 3. Train it on the Train Data Set.

# 4. Calculate the score.

# 5. Store the score into the data frame column.

for ii in range(numComb):
    kernelType = dfModelScore.loc[ii, 'Kernel Type']
    paramH = dfModelScore.loc[ii, 'h']
    print(f'Processing model {ii + 1:03d} out of {numComb} with `Kernel Type` = {kernelType} and `h` = {paramH}.')
    oKerReg = KernelRegressor(kernelType = kernelType, paramH = paramH)
    vYPred = cross_val_predict(oKerReg, mX, vY, cv = KFold(n_splits = mX.shape[0]))
    scoreR2 = r2_score(vY, vYPred)
    dfModelScore.loc[ii, 'R2'] = scoreR2
    print(f'Finished processing model {ii + 1:03d} with `R2 = {scoreR2}.')

#===============================================================#

Processing model 001 out of 160 with `Kernel Type` = Cosine and `h` = 0.1.

Finished processing model 001 with `R2 = -6.0143334549242375.

Processing model 002 out of 160 with `Kernel Type` = Cosine and `h` = 0.22564102564102564.

Finished processing model 002 with `R2 = -5.846558840333477.

Processing model 003 out of 160 with `Kernel Type` = Cosine and `h` = 0.35128205128205126.

Finished processing model 003 with `R2 = -5.240863527423735.

Processing model 004 out of 160 with `Kernel Type` = Cosine and `h` = 0.4769230769230769.

Finished processing model 004 with `R2 = -4.047268714522483.

Processing model 005 out of 160 with `Kernel Type` = Cosine and `h` = 0.6025641025641025.

Finished processing model 005 with `R2 = -2.799042623359844.

Processing model 006 out of 160 with `Kernel Type` = Cosine and `h` = 0.7282051282051282.

Finished processing model 006 with `R2 = -1.586844260286838.

Processing model 007 out of 160 with `Kernel Type` = Cosine and `h` = 0.8538461538461538.

Finished processing model 007 with `R2 = -0.8421109844335779.

Processing model 008 out of 160 with `Kernel Type` = Cosine and `h` = 0.9794871794871794.

Finished processing model 008 with `R2 = -0.14865071561559495.

Processing model 009 out of 160 with `Kernel Type` = Cosine and `h` = 1.1051282051282052.

Finished processing model 009 with `R2 = 0.23076458838521274.

Processing model 010 out of 160 with `Kernel Type` = Cosine and `h` = 1.2307692307692308.

Finished processing model 010 with `R2 = 0.4480127226220688.

Processing model 011 out of 160 with `Kernel Type` = Cosine and `h` = 1.3564102564102565.

Finished processing model 011 with `R2 = 0.5327579280461063.

Processing model 012 out of 160 with `Kernel Type` = Cosine and `h` = 1.4820512820512821.

Finished processing model 012 with `R2 = 0.6252353748703912.

Processing model 013 out of 160 with `Kernel Type` = Cosine and `h` = 1.6076923076923078.

Finished processing model 013 with `R2 = 0.6465126775147094.

Processing model 014 out of 160 with `Kernel Type` = Cosine and `h` = 1.7333333333333334.

Finished processing model 014 with `R2 = 0.7332248763819829.

Processing model 015 out of 160 with `Kernel Type` = Cosine and `h` = 1.858974358974359.

Finished processing model 015 with `R2 = 0.7233313282212972.

Processing model 016 out of 160 with `Kernel Type` = Cosine and `h` = 1.9846153846153847.

Finished processing model 016 with `R2 = 0.7569323380916396.

Processing model 017 out of 160 with `Kernel Type` = Cosine and `h` = 2.1102564102564103.

Finished processing model 017 with `R2 = 0.7544750404481336.

Processing model 018 out of 160 with `Kernel Type` = Cosine and `h` = 2.235897435897436.

Finished processing model 018 with `R2 = 0.7510287664109137.

Processing model 019 out of 160 with `Kernel Type` = Cosine and `h` = 2.3615384615384616.

Finished processing model 019 with `R2 = 0.7897250128557816.

Processing model 020 out of 160 with `Kernel Type` = Cosine and `h` = 2.4871794871794872.

Finished processing model 020 with `R2 = 0.785512883828621.

Processing model 021 out of 160 with `Kernel Type` = Cosine and `h` = 2.612820512820513.

Finished processing model 021 with `R2 = 0.7725739577767134.

Processing model 022 out of 160 with `Kernel Type` = Cosine and `h` = 2.7384615384615385.

Finished processing model 022 with `R2 = 0.7699030815140957.

Processing model 023 out of 160 with `Kernel Type` = Cosine and `h` = 2.864102564102564.

Finished processing model 023 with `R2 = 0.7561766909498184.

Processing model 024 out of 160 with `Kernel Type` = Cosine and `h` = 2.98974358974359.

Finished processing model 024 with `R2 = 0.7531621347762059.

Processing model 025 out of 160 with `Kernel Type` = Cosine and `h` = 3.1153846153846154.

Finished processing model 025 with `R2 = 0.7413572132667272.

Processing model 026 out of 160 with `Kernel Type` = Cosine and `h` = 3.241025641025641.

Finished processing model 026 with `R2 = 0.7297243688576989.

Processing model 027 out of 160 with `Kernel Type` = Cosine and `h` = 3.3666666666666667.

Finished processing model 027 with `R2 = 0.716567185077525.

Processing model 028 out of 160 with `Kernel Type` = Cosine and `h` = 3.4923076923076923.

Finished processing model 028 with `R2 = 0.703356696576499.

Processing model 029 out of 160 with `Kernel Type` = Cosine and `h` = 3.617948717948718.

Finished processing model 029 with `R2 = 0.6904074176636679.

Processing model 030 out of 160 with `Kernel Type` = Cosine and `h` = 3.7435897435897436.

Finished processing model 030 with `R2 = 0.6777214975395125.

Processing model 031 out of 160 with `Kernel Type` = Cosine and `h` = 3.8692307692307693.

Finished processing model 031 with `R2 = 0.6651344083668558.

Processing model 032 out of 160 with `Kernel Type` = Cosine and `h` = 3.994871794871795.

Finished processing model 032 with `R2 = 0.6526966301628636.

Processing model 033 out of 160 with `Kernel Type` = Cosine and `h` = 4.12051282051282.

Finished processing model 033 with `R2 = 0.6404700344330101.

Processing model 034 out of 160 with `Kernel Type` = Cosine and `h` = 4.246153846153845.

Finished processing model 034 with `R2 = 0.6284372802392036.

Processing model 035 out of 160 with `Kernel Type` = Cosine and `h` = 4.371794871794871.

Finished processing model 035 with `R2 = 0.6165398252122973.

Processing model 036 out of 160 with `Kernel Type` = Cosine and `h` = 4.4974358974358974.

Finished processing model 036 with `R2 = 0.6047463834574953.

Processing model 037 out of 160 with `Kernel Type` = Cosine and `h` = 4.623076923076923.

Finished processing model 037 with `R2 = 0.5930542649867989.

Processing model 038 out of 160 with `Kernel Type` = Cosine and `h` = 4.748717948717948.

Finished processing model 038 with `R2 = 0.5814850809414163.

Processing model 039 out of 160 with `Kernel Type` = Cosine and `h` = 4.874358974358974.

Finished processing model 039 with `R2 = 0.5700672498715097.

Processing model 040 out of 160 with `Kernel Type` = Cosine and `h` = 5.0.

Finished processing model 040 with `R2 = 0.5588220773671109.

Processing model 041 out of 160 with `Kernel Type` = Gaussian and `h` = 0.1.

Finished processing model 041 with `R2 = -2.5275364128182267.

Processing model 042 out of 160 with `Kernel Type` = Gaussian and `h` = 0.22564102564102564.

Finished processing model 042 with `R2 = 0.6121394841032461.

Processing model 043 out of 160 with `Kernel Type` = Gaussian and `h` = 0.35128205128205126.

Finished processing model 043 with `R2 = 0.8465883983963417.

Processing model 044 out of 160 with `Kernel Type` = Gaussian and `h` = 0.4769230769230769.

Finished processing model 044 with `R2 = 0.8795229475835388.

Processing model 045 out of 160 with `Kernel Type` = Gaussian and `h` = 0.6025641025641025.

Finished processing model 045 with `R2 = 0.8610906451087534.

Processing model 046 out of 160 with `Kernel Type` = Gaussian and `h` = 0.7282051282051282.

Finished processing model 046 with `R2 = 0.8318094416528194.

Processing model 047 out of 160 with `Kernel Type` = Gaussian and `h` = 0.8538461538461538.

Finished processing model 047 with `R2 = 0.7979584483589652.

Processing model 048 out of 160 with `Kernel Type` = Gaussian and `h` = 0.9794871794871794.

Finished processing model 048 with `R2 = 0.763546154405765.

Processing model 049 out of 160 with `Kernel Type` = Gaussian and `h` = 1.1051282051282052.

Finished processing model 049 with `R2 = 0.7285570311841791.

Processing model 050 out of 160 with `Kernel Type` = Gaussian and `h` = 1.2307692307692308.

Finished processing model 050 with `R2 = 0.6924719067414478.

Processing model 051 out of 160 with `Kernel Type` = Gaussian and `h` = 1.3564102564102565.

Finished processing model 051 with `R2 = 0.6562155118693755.

Processing model 052 out of 160 with `Kernel Type` = Gaussian and `h` = 1.4820512820512821.

Finished processing model 052 with `R2 = 0.6211047647293036.

Processing model 053 out of 160 with `Kernel Type` = Gaussian and `h` = 1.6076923076923078.

Finished processing model 053 with `R2 = 0.5879243957121196.

Processing model 054 out of 160 with `Kernel Type` = Gaussian and `h` = 1.7333333333333334.

Finished processing model 054 with `R2 = 0.5569001059282681.

Processing model 055 out of 160 with `Kernel Type` = Gaussian and `h` = 1.858974358974359.

Finished processing model 055 with `R2 = 0.5279554391630081.

Processing model 056 out of 160 with `Kernel Type` = Gaussian and `h` = 1.9846153846153847.

Finished processing model 056 with `R2 = 0.5009064084845636.

Processing model 057 out of 160 with `Kernel Type` = Gaussian and `h` = 2.1102564102564103.

Finished processing model 057 with `R2 = 0.4755563220273633.

Processing model 058 out of 160 with `Kernel Type` = Gaussian and `h` = 2.235897435897436.

Finished processing model 058 with `R2 = 0.4517308104529788.

Processing model 059 out of 160 with `Kernel Type` = Gaussian and `h` = 2.3615384615384616.

Finished processing model 059 with `R2 = 0.4292841479700531.

Processing model 060 out of 160 with `Kernel Type` = Gaussian and `h` = 2.4871794871794872.

Finished processing model 060 with `R2 = 0.4080930081989712.

Processing model 061 out of 160 with `Kernel Type` = Gaussian and `h` = 2.612820512820513.

Finished processing model 061 with `R2 = 0.38804860047504375.

Processing model 062 out of 160 with `Kernel Type` = Gaussian and `h` = 2.7384615384615385.

Finished processing model 062 with `R2 = 0.3690551695439902.

Processing model 063 out of 160 with `Kernel Type` = Gaussian and `h` = 2.864102564102564.

Finished processing model 063 with `R2 = 0.35103493546629017.

Processing model 064 out of 160 with `Kernel Type` = Gaussian and `h` = 2.98974358974359.

Finished processing model 064 with `R2 = 0.33393186670640873.

Processing model 065 out of 160 with `Kernel Type` = Gaussian and `h` = 3.1153846153846154.

Finished processing model 065 with `R2 = 0.3177084744299288.

Processing model 066 out of 160 with `Kernel Type` = Gaussian and `h` = 3.241025641025641.

Finished processing model 066 with `R2 = 0.3023375508698024.

Processing model 067 out of 160 with `Kernel Type` = Gaussian and `h` = 3.3666666666666667.

Finished processing model 067 with `R2 = 0.287794207769103.

Processing model 068 out of 160 with `Kernel Type` = Gaussian and `h` = 3.4923076923076923.

Finished processing model 068 with `R2 = 0.2740512413202366.

Processing model 069 out of 160 with `Kernel Type` = Gaussian and `h` = 3.617948717948718.

Finished processing model 069 with `R2 = 0.2610777131888252.

Processing model 070 out of 160 with `Kernel Type` = Gaussian and `h` = 3.7435897435897436.

Finished processing model 070 with `R2 = 0.24883939539128808.

Processing model 071 out of 160 with `Kernel Type` = Gaussian and `h` = 3.8692307692307693.

Finished processing model 071 with `R2 = 0.23729990053889138.

Processing model 072 out of 160 with `Kernel Type` = Gaussian and `h` = 3.994871794871795.

Finished processing model 072 with `R2 = 0.2264218506463629.

Processing model 073 out of 160 with `Kernel Type` = Gaussian and `h` = 4.12051282051282.

Finished processing model 073 with `R2 = 0.21616783588512878.

Processing model 074 out of 160 with `Kernel Type` = Gaussian and `h` = 4.246153846153845.

Finished processing model 074 with `R2 = 0.20650111798531423.

Processing model 075 out of 160 with `Kernel Type` = Gaussian and `h` = 4.371794871794871.

Finished processing model 075 with `R2 = 0.19738611077773383.

Processing model 076 out of 160 with `Kernel Type` = Gaussian and `h` = 4.4974358974358974.

Finished processing model 076 with `R2 = 0.18878868848289632.

Processing model 077 out of 160 with `Kernel Type` = Gaussian and `h` = 4.623076923076923.

Finished processing model 077 with `R2 = 0.1806763681255773.

Processing model 078 out of 160 with `Kernel Type` = Gaussian and `h` = 4.748717948717948.

Finished processing model 078 with `R2 = 0.17301840253599154.

Processing model 079 out of 160 with `Kernel Type` = Gaussian and `h` = 4.874358974358974.

Finished processing model 079 with `R2 = 0.16578581086999622.

Processing model 080 out of 160 with `Kernel Type` = Gaussian and `h` = 5.0.

Finished processing model 080 with `R2 = 0.15895136604225946.

Processing model 081 out of 160 with `Kernel Type` = Triangular and `h` = 0.1.

Finished processing model 081 with `R2 = -6.0143334549242375.

Processing model 082 out of 160 with `Kernel Type` = Triangular and `h` = 0.22564102564102564.

Finished processing model 082 with `R2 = -5.846558840333477.

Processing model 083 out of 160 with `Kernel Type` = Triangular and `h` = 0.35128205128205126.

Finished processing model 083 with `R2 = -5.240860967759973.

Processing model 084 out of 160 with `Kernel Type` = Triangular and `h` = 0.4769230769230769.

Finished processing model 084 with `R2 = -4.047132482669339.

Processing model 085 out of 160 with `Kernel Type` = Triangular and `h` = 0.6025641025641025.

Finished processing model 085 with `R2 = -2.798296071014598.

Processing model 086 out of 160 with `Kernel Type` = Triangular and `h` = 0.7282051282051282.

Finished processing model 086 with `R2 = -1.5860891319986843.

Processing model 087 out of 160 with `Kernel Type` = Triangular and `h` = 0.8538461538461538.

Finished processing model 087 with `R2 = -0.8369140976028091.

Processing model 088 out of 160 with `Kernel Type` = Triangular and `h` = 0.9794871794871794.

Finished processing model 088 with `R2 = -0.14691248029552173.

Processing model 089 out of 160 with `Kernel Type` = Triangular and `h` = 1.1051282051282052.

Finished processing model 089 with `R2 = 0.2314432624441468.

Processing model 090 out of 160 with `Kernel Type` = Triangular and `h` = 1.2307692307692308.

Finished processing model 090 with `R2 = 0.4484890155482364.

Processing model 091 out of 160 with `Kernel Type` = Triangular and `h` = 1.3564102564102565.

Finished processing model 091 with `R2 = 0.5285056282605223.

Processing model 092 out of 160 with `Kernel Type` = Triangular and `h` = 1.4820512820512821.

Finished processing model 092 with `R2 = 0.6146724163910834.

Processing model 093 out of 160 with `Kernel Type` = Triangular and `h` = 1.6076923076923078.

Finished processing model 093 with `R2 = 0.6334968063845496.

Processing model 094 out of 160 with `Kernel Type` = Triangular and `h` = 1.7333333333333334.

Finished processing model 094 with `R2 = 0.7168672666217224.

Processing model 095 out of 160 with `Kernel Type` = Triangular and `h` = 1.858974358974359.

Finished processing model 095 with `R2 = 0.7048988851045139.

Processing model 096 out of 160 with `Kernel Type` = Triangular and `h` = 1.9846153846153847.

Finished processing model 096 with `R2 = 0.7353543093661505.

Processing model 097 out of 160 with `Kernel Type` = Triangular and `h` = 2.1102564102564103.

Finished processing model 097 with `R2 = 0.731743174571343.

Processing model 098 out of 160 with `Kernel Type` = Triangular and `h` = 2.235897435897436.

Finished processing model 098 with `R2 = 0.7257535538108972.

Processing model 099 out of 160 with `Kernel Type` = Triangular and `h` = 2.3615384615384616.

Finished processing model 099 with `R2 = 0.7600464443044005.

Processing model 100 out of 160 with `Kernel Type` = Triangular and `h` = 2.4871794871794872.

Finished processing model 100 with `R2 = 0.7516075941372972.

Processing model 101 out of 160 with `Kernel Type` = Triangular and `h` = 2.612820512820513.

Finished processing model 101 with `R2 = 0.7354764791117783.

Processing model 102 out of 160 with `Kernel Type` = Triangular and `h` = 2.7384615384615385.

Finished processing model 102 with `R2 = 0.729643990874307.

Processing model 103 out of 160 with `Kernel Type` = Triangular and `h` = 2.864102564102564.

Finished processing model 103 with `R2 = 0.7122406855468462.

Processing model 104 out of 160 with `Kernel Type` = Triangular and `h` = 2.98974358974359.

Finished processing model 104 with `R2 = 0.7084312619551112.

Processing model 105 out of 160 with `Kernel Type` = Triangular and `h` = 3.1153846153846154.

Finished processing model 105 with `R2 = 0.6957942913842284.

Processing model 106 out of 160 with `Kernel Type` = Triangular and `h` = 3.241025641025641.

Finished processing model 106 with `R2 = 0.6831878437097254.

Processing model 107 out of 160 with `Kernel Type` = Triangular and `h` = 3.3666666666666667.

Finished processing model 107 with `R2 = 0.66854560188787.

Processing model 108 out of 160 with `Kernel Type` = Triangular and `h` = 3.4923076923076923.

Finished processing model 108 with `R2 = 0.6543497241512761.

Processing model 109 out of 160 with `Kernel Type` = Triangular and `h` = 3.617948717948718.

Finished processing model 109 with `R2 = 0.6407772096181051.

Processing model 110 out of 160 with `Kernel Type` = Triangular and `h` = 3.7435897435897436.

Finished processing model 110 with `R2 = 0.627201185816792.

Processing model 111 out of 160 with `Kernel Type` = Triangular and `h` = 3.8692307692307693.

Finished processing model 111 with `R2 = 0.613350105722119.

Processing model 112 out of 160 with `Kernel Type` = Triangular and `h` = 3.994871794871795.

Finished processing model 112 with `R2 = 0.6001586098621644.

Processing model 113 out of 160 with `Kernel Type` = Triangular and `h` = 4.12051282051282.

Finished processing model 113 with `R2 = 0.5871945657792486.

Processing model 114 out of 160 with `Kernel Type` = Triangular and `h` = 4.246153846153845.

Finished processing model 114 with `R2 = 0.5744083988396895.

Processing model 115 out of 160 with `Kernel Type` = Triangular and `h` = 4.371794871794871.

Finished processing model 115 with `R2 = 0.5616549283665744.

Processing model 116 out of 160 with `Kernel Type` = Triangular and `h` = 4.4974358974358974.

Finished processing model 116 with `R2 = 0.5490272913912144.

Processing model 117 out of 160 with `Kernel Type` = Triangular and `h` = 4.623076923076923.

Finished processing model 117 with `R2 = 0.5365694897678108.

Processing model 118 out of 160 with `Kernel Type` = Triangular and `h` = 4.748717948717948.

Finished processing model 118 with `R2 = 0.5243246688200633.

Processing model 119 out of 160 with `Kernel Type` = Triangular and `h` = 4.874358974358974.

Finished processing model 119 with `R2 = 0.5123155034599327.

Processing model 120 out of 160 with `Kernel Type` = Triangular and `h` = 5.0.

Finished processing model 120 with `R2 = 0.5005798908202483.

Processing model 121 out of 160 with `Kernel Type` = Uniform and `h` = 0.1.

Finished processing model 121 with `R2 = -6.0143334549242375.

Processing model 122 out of 160 with `Kernel Type` = Uniform and `h` = 0.22564102564102564.

Finished processing model 122 with `R2 = -5.988603929708586.

Processing model 123 out of 160 with `Kernel Type` = Uniform and `h` = 0.35128205128205126.

Finished processing model 123 with `R2 = -5.917214546093458.

Processing model 124 out of 160 with `Kernel Type` = Uniform and `h` = 0.4769230769230769.

Finished processing model 124 with `R2 = -5.819566565165473.

Processing model 125 out of 160 with `Kernel Type` = Uniform and `h` = 0.6025641025641025.

Finished processing model 125 with `R2 = -5.37518814199993.

Processing model 126 out of 160 with `Kernel Type` = Uniform and `h` = 0.7282051282051282.

Finished processing model 126 with `R2 = -5.114740215805383.

Processing model 127 out of 160 with `Kernel Type` = Uniform and `h` = 0.8538461538461538.

Finished processing model 127 with `R2 = -4.526163285212605.

Processing model 128 out of 160 with `Kernel Type` = Uniform and `h` = 0.9794871794871794.

Finished processing model 128 with `R2 = -3.8999710842788735.

Processing model 129 out of 160 with `Kernel Type` = Uniform and `h` = 1.1051282051282052.

Finished processing model 129 with `R2 = -3.246021911259321.

Processing model 130 out of 160 with `Kernel Type` = Uniform and `h` = 1.2307692307692308.

Finished processing model 130 with `R2 = -2.723340207837504.

Processing model 131 out of 160 with `Kernel Type` = Uniform and `h` = 1.3564102564102565.

Finished processing model 131 with `R2 = -2.142064724232441.

Processing model 132 out of 160 with `Kernel Type` = Uniform and `h` = 1.4820512820512821.

Finished processing model 132 with `R2 = -1.3580990734804552.

Processing model 133 out of 160 with `Kernel Type` = Uniform and `h` = 1.6076923076923078.

Finished processing model 133 with `R2 = -1.0305915998232331.

Processing model 134 out of 160 with `Kernel Type` = Uniform and `h` = 1.7333333333333334.

Finished processing model 134 with `R2 = -0.7589711329801199.

Processing model 135 out of 160 with `Kernel Type` = Uniform and `h` = 1.858974358974359.

Finished processing model 135 with `R2 = -0.3609875860459275.

Processing model 136 out of 160 with `Kernel Type` = Uniform and `h` = 1.9846153846153847.

Finished processing model 136 with `R2 = -0.14222994306607695.

Processing model 137 out of 160 with `Kernel Type` = Uniform and `h` = 2.1102564102564103.

Finished processing model 137 with `R2 = 0.030291008246415396.

Processing model 138 out of 160 with `Kernel Type` = Uniform and `h` = 2.235897435897436.

Finished processing model 138 with `R2 = 0.2325617621229631.

Processing model 139 out of 160 with `Kernel Type` = Uniform and `h` = 2.3615384615384616.

Finished processing model 139 with `R2 = 0.3134164718674928.

Processing model 140 out of 160 with `Kernel Type` = Uniform and `h` = 2.4871794871794872.

Finished processing model 140 with `R2 = 0.4651234172687694.

Processing model 141 out of 160 with `Kernel Type` = Uniform and `h` = 2.612820512820513.

Finished processing model 141 with `R2 = 0.49414730621444447.

Processing model 142 out of 160 with `Kernel Type` = Uniform and `h` = 2.7384615384615385.

Finished processing model 142 with `R2 = 0.5077244294046401.

Processing model 143 out of 160 with `Kernel Type` = Uniform and `h` = 2.864102564102564.

Finished processing model 143 with `R2 = 0.5374492229871592.

Processing model 144 out of 160 with `Kernel Type` = Uniform and `h` = 2.98974358974359.

Finished processing model 144 with `R2 = 0.5822884964527504.

Processing model 145 out of 160 with `Kernel Type` = Uniform and `h` = 3.1153846153846154.

Finished processing model 145 with `R2 = 0.5925737977743148.

Processing model 146 out of 160 with `Kernel Type` = Uniform and `h` = 3.241025641025641.

Finished processing model 146 with `R2 = 0.5998260987425498.

Processing model 147 out of 160 with `Kernel Type` = Uniform and `h` = 3.3666666666666667.

Finished processing model 147 with `R2 = 0.6583541636039493.

Processing model 148 out of 160 with `Kernel Type` = Uniform and `h` = 3.4923076923076923.

Finished processing model 148 with `R2 = 0.6711108392834786.

Processing model 149 out of 160 with `Kernel Type` = Uniform and `h` = 3.617948717948718.

Finished processing model 149 with `R2 = 0.6637873679595536.

Processing model 150 out of 160 with `Kernel Type` = Uniform and `h` = 3.7435897435897436.

Finished processing model 150 with `R2 = 0.6920033795227634.

Processing model 151 out of 160 with `Kernel Type` = Uniform and `h` = 3.8692307692307693.

Finished processing model 151 with `R2 = 0.6767811372984631.

Processing model 152 out of 160 with `Kernel Type` = Uniform and `h` = 3.994871794871795.

Finished processing model 152 with `R2 = 0.6799493836780217.

Processing model 153 out of 160 with `Kernel Type` = Uniform and `h` = 4.12051282051282.

Finished processing model 153 with `R2 = 0.6763381418744615.

Processing model 154 out of 160 with `Kernel Type` = Uniform and `h` = 4.246153846153845.

Finished processing model 154 with `R2 = 0.6736419981648771.

Processing model 155 out of 160 with `Kernel Type` = Uniform and `h` = 4.371794871794871.

Finished processing model 155 with `R2 = 0.6636367477342335.

Processing model 156 out of 160 with `Kernel Type` = Uniform and `h` = 4.4974358974358974.

Finished processing model 156 with `R2 = 0.665475504089279.

Processing model 157 out of 160 with `Kernel Type` = Uniform and `h` = 4.623076923076923.

Finished processing model 157 with `R2 = 0.6889690301795104.

Processing model 158 out of 160 with `Kernel Type` = Uniform and `h` = 4.748717948717948.

Finished processing model 158 with `R2 = 0.6725476270461084.

Processing model 159 out of 160 with `Kernel Type` = Uniform and `h` = 4.874358974358974.

Finished processing model 159 with `R2 = 0.6637628825609654.

Processing model 160 out of 160 with `Kernel Type` = Uniform and `h` = 5.0.

Finished processing model 160 with `R2 = 0.663488209942994.

# Display Sorted Results (Descending)

# Pandas allows sorting data by any column using the `sort_values()` method

# The `head()` method shows only the first rows

dfModelScore.sort_values(by = ['R2'], ascending = False).head(10)

| | Kernel Type | h | R2 |
|---|---|---|---|
| 43 | Gaussian | 0.476923 | 0.879523 |
| 44 | Gaussian | 0.602564 | 0.861091 |
| 42 | Gaussian | 0.351282 | 0.846588 |
| 45 | Gaussian | 0.728205 | 0.831809 |
| 46 | Gaussian | 0.853846 | 0.797958 |
| 18 | Cosine | 2.361538 | 0.789725 |
| 19 | Cosine | 2.487179 | 0.785513 |
| 20 | Cosine | 2.612821 | 0.772574 |
| 21 | Cosine | 2.738462 | 0.769903 |
| 47 | Gaussian | 0.979487 | 0.763546 |

# Plotting the Cross Validation R2 as a Heat Map

# We can pivot the data set created to have a 2D matrix of the score as a function of parameters.

hF, hA = plt.subplots(figsize = (9, 9))

# hA = sns.heatmap(data = dfModelScore.pivot(index = 'h', columns = 'Kernel Type', values = 'R2'), robust = True, linewidths = 1, annot = True, fmt = '0.2f', norm = LogNorm(), ax = hA)

hA = sns.heatmap(data = dfModelScore.pivot(index = 'h', columns = 'Kernel Type', values = 'R2'), robust = True, linewidths = 1, annot = True, fmt = '0.2f', cmap = 'viridis', ax = hA)

hA.set_title('R2 of the Cross Validation')

plt.show()

(?) What’s the actual model in production for Kernel Regression?

(#) In production we’d extract the best hyper parameters and then train again on the whole data.

(#) Usually, for selecting the best hyper parameters, it is better to use cross validation with a low number of folds.

Leave One Out is better suited for estimating real world performance. The logic is that the best hyper parameters should be selected when they are tested on folds with low correlation between them, which a low number of folds provides since the training sets overlap less.
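The two cross validation schemes mentioned above can be set up as in the following minimal sketch. It assumes synthetic data (not the Boston data) and a hypothetical `GaussianKernelRegressorSketch` class which is a simplified stand-in for the notebook's `KernelRegressor`, so the scores it prints are illustrative only.

```python
import numpy as np
from scipy.spatial.distance import cdist
from sklearn.base import BaseEstimator, RegressorMixin
from sklearn.metrics import r2_score
from sklearn.model_selection import KFold, LeaveOneOut, cross_val_predict

class GaussianKernelRegressorSketch(RegressorMixin, BaseEstimator):
    # Hypothetical minimal stand-in for the notebook's `KernelRegressor` (Gaussian kernel only)
    def __init__(self, paramH = 1.0):
        self.paramH = paramH

    def fit(self, mX, vY):
        # Kernel regression is memory based: fitting only stores the data
        self.mX_ = np.asarray(mX, dtype = float)
        self.vY_ = np.asarray(vY, dtype = float)
        return self

    def predict(self, mX):
        mD = cdist(np.asarray(mX, dtype = float), self.mX_) #<! Distances to the training samples
        mW = np.exp(-0.5 * np.square(mD / self.paramH))     #<! Gaussian weights (Constant cancels in the ratio)
        return (mW @ self.vY_) / np.sum(mW, axis = 1)       #<! Weighted average

# Synthetic data (Assumption, for illustration only)
oRng   = np.random.default_rng(0)
mXDemo = oRng.uniform(-2.0, 2.0, size = (150, 2))
vYDemo = np.sin(mXDemo[:, 0]) + 0.5 * mXDemo[:, 1] + 0.1 * oRng.standard_normal(150)

oReg = GaussianKernelRegressorSketch(paramH = 0.5)

# Low number of folds: Typical choice for hyper parameter selection
vYPredKFold = cross_val_predict(oReg, mXDemo, vYDemo, cv = KFold(n_splits = 5, shuffle = True, random_state = 0))
# Leave One Out: Each sample is predicted by a model trained on all other samples
vYPredLoo = cross_val_predict(oReg, mXDemo, vYDemo, cv = LeaveOneOut())

print(f'5 Fold R2       : {r2_score(vYDemo, vYPredKFold)}')
print(f'Leave One Out R2: {r2_score(vYDemo, vYPredLoo)}')
```

In practice one would run the K-Fold variant over the grid of hyper parameters and keep Leave One Out for the final performance estimate of the selected model.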

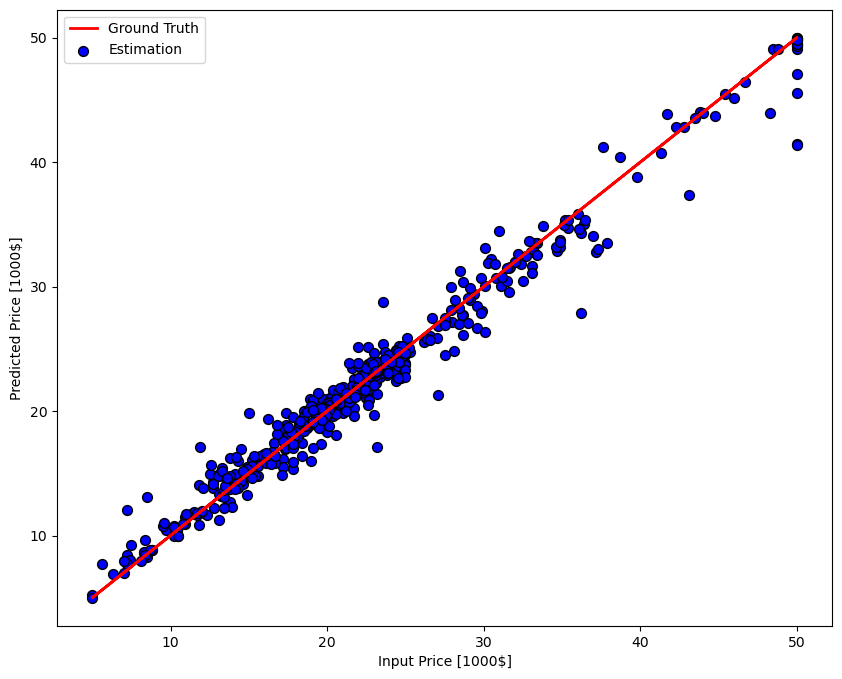

Regression Results#

Results of the best model.

# Optimal Model

# Extract best model Hyper Parameters

bestModelIdx = dfModelScore['R2'].idxmax()

kernelType = dfModelScore.loc[bestModelIdx, 'Kernel Type']

paramH = dfModelScore.loc[bestModelIdx, 'h']

# Construct & Train best model

oKerReg = KernelRegressor(kernelType = kernelType, paramH = paramH)

oKerReg = oKerReg.fit(mX, vY)

# Plot Regression Results

hF, hA = plt.subplots(figsize = (10, 8))

hA = PlotRegressionResults(vY, oKerReg.predict(mX), hA = hA)

hA.set_xlabel('Input Price [1000$]')

hA.set_ylabel('Predicted Price [1000$]')

Text(0, 0.5, 'Predicted Price [1000$]')

Vectorized Cross Validation for Kernel Regression#

One way to speed up the process is to pre calculate the distance matrix for the whole data set once.

Each fold then uses the sub matrix of it which matches the fold's training and test indices.

For instance, let’s recreate the Leave One Out:

Calculate the Distance Matrix \(\boldsymbol{D}_{x} \in \mathbb{R}^{N \times N}\) such that \( \boldsymbol{D}_{x} \left[ i, j \right] = \left\| \boldsymbol{x}_{i}-\boldsymbol{x}_{j} \right\| _{2}\).

Calculate the weights matrix \(\boldsymbol{W} \in \mathbb{R}^{N \times N}\) such that \(\boldsymbol{W} \left[ i, j \right] = k \left( \frac{1}{h} \boldsymbol{D}_{x} \left[ i, j \right] \right)\).

To estimate \({y}_{i}\) without using \(\boldsymbol{x}_{i}\), set \(\boldsymbol{W} \left[ i, i \right] = 0\).

Apply kernel regression \(\hat{\boldsymbol{y}} = \left( \boldsymbol{W} \boldsymbol{y} \right) \oslash \left( \boldsymbol{W} \boldsymbol{1} \right)\), where \(\oslash\) is element wise division.

# Vectorized Leave One Out Kernel Regression

numSamples = len(vY) #<! Number of Samples

hK = GaussianKernel #<! Kernel

paramH = 0.3 #<! Bandwidth

mD = sp.spatial.distance.cdist(mX, mX, metric = 'euclidean') #<! Distance Matrix

mW = hK(mD / paramH) #<! Weights matrix

# Zeroing the diagonal so a sample does not contribute to its own estimate

mW[range(numSamples), range(numSamples)] = 0 #<! Leave One Out

vYPred = (mW @ vY) / np.sum(mW, axis = 1) #<! Kernel Regression

print(f'The Leave One Out R2 Score for Kernel Type = {hK} and Bandwidth = {paramH} is {r2_score(vY, vYPred) }.')

The Leave One Out R2 Score for Kernel Type = <function GaussianKernel at 0x7fa46e8e8220> and Bandwidth = 0.3 is 0.8861968191595648.
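The same pre calculated distance matrix can serve K-Fold cross validation by slicing the rows of the test samples and the columns of the training samples. The following is a minimal sketch under assumptions: synthetic data, and the hypothetical helpers `GaussianProfile()` and `KFoldKernelRegression()` which are not part of the notebook. The Gaussian profile is left unnormalized since any constant factor cancels in the ratio.

```python
import numpy as np
from scipy.spatial.distance import cdist
from sklearn.metrics import r2_score
from sklearn.model_selection import KFold

def GaussianProfile(mU):
    # Unnormalized Gaussian kernel (Hypothetical helper, constant cancels in the regression ratio)
    return np.exp(-0.5 * np.square(mU))

def KFoldKernelRegression(mD, vY, paramH, numFolds = 5, seedNum = 0):
    # Out of fold predictions using a pre calculated distance matrix `mD` (N x N)
    vYPred = np.empty(len(vY))
    oKFold = KFold(n_splits = numFolds, shuffle = True, random_state = seedNum)
    for vTrainIdx, vTestIdx in oKFold.split(mD):
        # Rows: Test samples, Columns: Train samples
        mW = GaussianProfile(mD[np.ix_(vTestIdx, vTrainIdx)] / paramH)
        vYPred[vTestIdx] = (mW @ vY[vTrainIdx]) / np.sum(mW, axis = 1)
    return vYPred

# Synthetic data (Assumption, for illustration only)
oRng  = np.random.default_rng(0)
mXSyn = oRng.standard_normal((200, 3))
vYSyn = np.sin(mXSyn[:, 0]) + 0.1 * oRng.standard_normal(200)

mDSyn = cdist(mXSyn, mXSyn, metric = 'euclidean') #<! Calculated once, reused for every bandwidth

for paramHSyn in [0.25, 0.5, 1.0]:
    vYPredSyn = KFoldKernelRegression(mDSyn, vYSyn, paramHSyn)
    print(f'h = {paramHSyn}: 5 Fold R2 = {r2_score(vYSyn, vYPredSyn)}')
```

Setting `numFolds = len(vY)` makes every fold a single sample, which reproduces the Leave One Out estimate obtained by zeroing the diagonal of the weights matrix.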

(@) Plot the regression of the best model (See previous notebooks).