Polynomial Fit#

Notebook by:

Royi Avital RoyiAvital@fixelalgorithms.com

Revision History#

Version |

Date |

User |

Content / Changes |

|---|---|---|---|

1.0.000 |

23/03/2024 |

Royi Avital |

First version |

# Import Packages

# General Tools

import numpy as np

import scipy as sp

import pandas as pd

# Machine Learning

# Miscellaneous

import math

import os

from platform import python_version

import random

import timeit

# Typing

from typing import Callable, Dict, List, Optional, Set, Tuple, Union

# Visualization

import matplotlib as mpl

import matplotlib.pyplot as plt

import seaborn as sns

# Jupyter

from IPython import get_ipython

from IPython.display import Image

from IPython.display import display

from ipywidgets import Dropdown, FloatSlider, interact, IntSlider, Layout, SelectionSlider

from ipywidgets import interact

Notations#

(?) Question to answer interactively.

(!) Simple task to add code for the notebook.

(@) Optional / Extra self practice.

(#) Note / Useful resource / Food for thought.

Code Notations:

someVar = 2; #<! Notation for a variable

vVector = np.random.rand(4) #<! Notation for 1D array

mMatrix = np.random.rand(4, 3) #<! Notation for 2D array

tTensor = np.random.rand(4, 3, 2, 3) #<! Notation for nD array (Tensor)

tuTuple = (1, 2, 3) #<! Notation for a tuple

lList = [1, 2, 3] #<! Notation for a list

dDict = {1: 3, 2: 2, 3: 1} #<! Notation for a dictionary

oObj = MyClass() #<! Notation for an object

dfData = pd.DataFrame() #<! Notation for a data frame

dsData = pd.Series() #<! Notation for a series

hObj = plt.Axes() #<! Notation for an object / handler / function handler

Code Exercise#

Single line fill

vallToFill = ???

Multi Line to Fill (At least one)

# You need to start writing

????

Section to Fill

#===========================Fill This===========================#

# 1. Explanation about what to do.

# !! Remarks to follow / take under consideration.

mX = ???

???

#===============================================================#

# Configuration

# %matplotlib inline

seedNum = 512

np.random.seed(seedNum)

random.seed(seedNum)

# Matplotlib default color palette

lMatPltLibclr = ['#1f77b4', '#ff7f0e', '#2ca02c', '#d62728', '#9467bd', '#8c564b', '#e377c2', '#7f7f7f', '#bcbd22', '#17becf']

# sns.set_theme() #>! Apply SeaBorn theme

runInGoogleColab = 'google.colab' in str(get_ipython())

# Constants

FIG_SIZE_DEF = (8, 8)

ELM_SIZE_DEF = 50

CLASS_COLOR = ('b', 'r')

EDGE_COLOR = 'k'

MARKER_SIZE_DEF = 10

LINE_WIDTH_DEF = 2

# Courses Packages

import sys

sys.path.append('../')

sys.path.append('../../')

sys.path.append('../../../')

from utils.DataVisualization import PlotRegressionData

# General Auxiliary Functions

def PlotPolyFit( vX: np.ndarray, vY: np.ndarray, vP: Optional[np.ndarray] = None, P: int = 1, numGridPts: int = 1001,

hA: Optional[plt.Axes] = None, figSize: Tuple[int, int] = FIG_SIZE_DEF, markerSize: int = MARKER_SIZE_DEF,

lineWidth: int = LINE_WIDTH_DEF, axisTitle: str = None ) -> None:

if hA is None:

hF, hA = plt.subplots(1, 2, figsize = figSize)

else:

hF = hA[0].get_figure()

numSamples = len(vY)

# Polyfit

vW = np.polyfit(vX, vY, P)

# MSE

vHatY = np.polyval(vW, vX)

MSE = (np.linalg.norm(vY - vHatY) ** 2) / numSamples

# Plot

xx = np.linspace(np.floor(np.min(vX)), np.ceil(np.max(vX)), numGridPts)

yy = np.polyval(vW, xx)

hA[0].plot(vX, vY, '.r', ms = 10, label = '$y_i$')

hA[0].plot(xx, yy, 'b', lw = 2, label = '$\hat{f}(x)$')

hA[0].set_title (f'$P = {P}$\nMSE = {MSE}')

hA[0].set_xlabel('$x$')

# hA[0].axis(lAxis)

hA[0].grid()

hA[0].legend()

hA[1].stem(vW[::-1], label = 'Estimated')

if vP is not None:

hA[1].stem(vP[::-1], linefmt = 'C1:', markerfmt = 'D', label = 'Ground Truth')

numTicks = len(vW) if vP is None else max(len(vW), len(vP))

hA[1].set_xticks(range(numTicks))

hA[1].set_title('Coefficients')

hA[1].set_xlabel('$w$')

hA[1].legend()

# return hA

Polynomial Fit#

Polynomial Fit is about optimizing the parameters of a polynomial model to explain the given data.

There 2 main polynomials in this context:

The model parameters are liner with the data which means the solution is given by a Linear System.

Yet, in practice, due to noise or model incompatibility, the system can not be solved as it is.

Then a minimization is done using different objectives, among them the most popular are:

Least Squares (Induced by the \({L}^{2}\) Norm).

Least Deviation (Induced by the \({L}^{1}\) Norm).

Using the the Least Squares model generates a Linear Least Squares problem.

(#) There are many other objectives in the field of regression in general and linear fit specifically.

# Parameters

# Data Generation

numSamples = 30

noiseStd = 0.3

vP = np.array([0.5, 2, 5])

polynomDeg = 2

# Data Visualization

gridNoiseStd = 0.05

numGridPts = 250

Generate / Load Data#

In the following we’ll generate data according to the following model:

Where

# The Data Generating Function

def f( vX: np.ndarray, vP: np.ndarray ) -> np.ndarray:

# return 0.25 * (vX ** 2) + 2 * vX + 5

return np.polyval(vP, vX)

hF = lambda vX: f(vX, vP)

# Generate Data

vX = np.linspace(-2, 2, numSamples, endpoint = True) + (gridNoiseStd * np.random.randn(numSamples))

vN = noiseStd * np.random.randn(numSamples)

vY = hF(vX) + vN

print(f'The features data shape: {vX.shape}')

print(f'The labels data shape: {vY.shape}')

The features data shape: (30,)

The labels data shape: (30,)

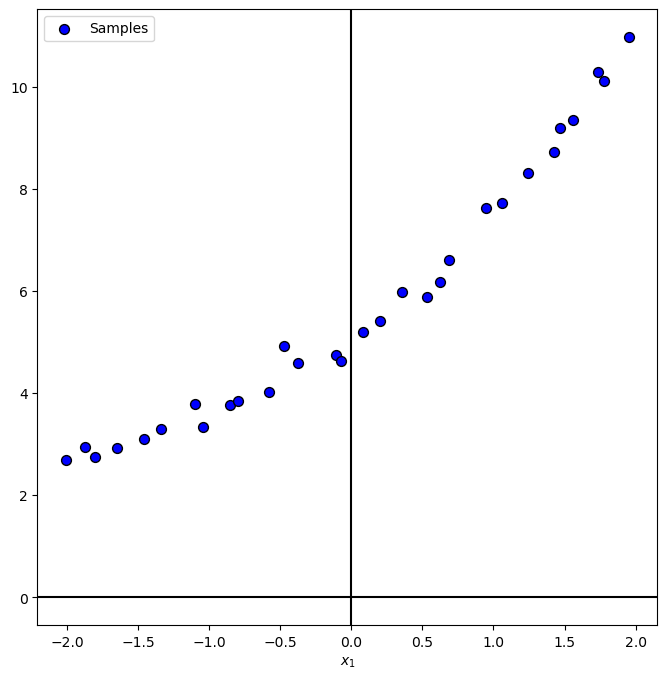

Plot Data#

# Plot the Data

PlotRegressionData(vX, vY)

plt.show()

(?) What would be the equivalent of class balance for regression? Think on the span and density of the values.

Train Polyfit Regressor#

The PolyFit optimization problem is given by:

Where

This is a polyfit with hyper parameter \(p\).

The optimal weights are calculated by linear system solvers.

Yet it is better to use solvers optimized for this task, such as:

NumPy:

polyfit.SciKit Learn:

LinearRegressioncombined withPolynomialFeatures.

In this notebook we’ll use the NumPy’s implementation.

(#) For arbitrary \(\Phi\) the above becomes a linear regression problem.

polynomDeg

2

# Polynomial Fit

# The order of Polynomial p(x) = w[0] * x**deg + ... + w[deg].

# Hence we need to show it reversed:

vW = np.polyfit(vX, vY, polynomDeg)

for ii in range(polynomDeg + 1):

print(f'The coefficient of degree {ii}: {vW[-1 - ii]:0.3f}')

The coefficient of degree 0: 5.082

The coefficient of degree 1: 2.032

The coefficient of degree 2: 0.467

Effect of the Polynomial Degree \(p\)#

The degree of the polynomial is basically the DoF of the model.

Tuning it is the way (Along with Regularization) to avoid underfit and overfit.

# The Polynomial Degree Parameter

hPolyFit = lambda P: PlotPolyFit(vX, vY, vP = vP, P = P)

pSlider = IntSlider(min = 0, max = 31, step = 1, value = 0, layout = Layout(width = '30%'))

interact(hPolyFit, P = pSlider)

plt.show()

(?) What happens when the degree of the polynomial is higher than the number of samples?

(?) What would be the optimal \(\boldsymbol{w}\) in case the model matrix is given by \(\boldsymbol{\Phi} = \begin{bmatrix} 5 & 2 x_{1} & \frac{1}{2} x_{1}^{2} \\ 5 & 2 x_{2} & \frac{1}{2} x_{2}^{2} & \\ \vdots & \vdots & \vdots \\ 5 & 2 x_{N} & \frac{1}{2} x_{N}^{2} \end{bmatrix}\)?

(#) The properties of the model matrix are important. As we basically after the best approximation of the data in the space its columns spans. For instance, for Polynomial Fit, we ca use better basis for polynomials.

Sensitivity to Support#

We’ll show the effect of the support, given a number of sample on the estimated weights (Coefficients).

# Data Generation

vN = 20 * noiseStd * np.random.randn(numSamples)

def GenDataByRadius( vP, P, vN, valR: float = 1.0 ):

vX = np.linspace(-valR, valR, np.size(vN), endpoint = True)

vY = f(vX, vP) + vN

PlotPolyFit(vX, vY, vP = vP, P = P)

# Interactive Visualization

hGenDataByRadius = lambda valR: GenDataByRadius(vP, polynomDeg, vN, valR)

rSlider = FloatSlider(min = 0.1, max = 50.0, step = 0.1, value = 0.1, layout = Layout(width = '30%'))

interact(hGenDataByRadius, valR = rSlider)

plt.show()

# Samples and Coefficients MSE as a Function of Support

# Doing the above manually.

def GenDataRadius( vP, vN, valR: float = 1.0 ):

vX = np.linspace(-valR, valR, np.size(vN), endpoint = True)

vY = f(vX, vP) + vN

return vX, vY

vR = np.linspace(0.1, 50, 20)

for valR in vR:

vX, vY = GenDataRadius(vP, 0.5 * vN, valR)

vW = np.polyfit(vX, vY, polynomDeg)

vYPred = np.polyval(vW, vX)

valMSESamples = np.mean(np.square(vYPred - vY))

valMSECoeff = np.mean(np.square(vW - vP))

print(f'The Support Radius : {valR}.')

print(f'The Samples MSE : {valMSESamples}.')

print(f'The Coefficients MSE: {valMSECoeff}.')

print(f'The Estimated Coef : {vW}')

The Support Radius : 0.1.

The Samples MSE : 8.515811666921199.

The Coefficients MSE: 15437.102809687623.

The Estimated Coef : [215.69605007 0.6031023 5.13092767]

The Support Radius : 2.7263157894736842.

The Samples MSE : 8.515811666921197.

The Coefficients MSE: 0.03453016283032978.

The Estimated Coef : [0.78952227 1.94876244 5.13092767]

The Support Radius : 5.352631578947368.

The Samples MSE : 8.515811666921199.

The Coefficients MSE: 0.007821562980785608.

The Estimated Coef : [0.57511032 1.9739026 5.13092767]

The Support Radius : 7.978947368421052.

The Samples MSE : 8.515811666921197.

The Coefficients MSE: 0.006197046393521294.

The Estimated Coef : [0.53380205 1.98249271 5.13092767]

The Support Radius : 10.605263157894736.

The Samples MSE : 8.5158116669212.

The Coefficients MSE: 0.0058938784857100685.

The Estimated Coef : [0.51913337 1.98682826 5.13092767]

The Support Radius : 13.23157894736842.

The Samples MSE : 8.515811666921206.

The Coefficients MSE: 0.005801532268737857.

The Estimated Coef : [0.51229167 1.9894427 5.13092767]

The Support Radius : 15.857894736842104.

The Samples MSE : 8.515811666921188.

The Coefficients MSE: 0.005764293450267161.

The Estimated Coef : [0.50855743 1.99119115 5.13092767]

The Support Radius : 18.484210526315792.

The Samples MSE : 8.515811666921186.

The Coefficients MSE: 0.00574627908399256.

The Estimated Coef : [0.50629843 1.99244275 5.13092767]

The Support Radius : 21.110526315789475.

The Samples MSE : 8.515811666921197.

The Coefficients MSE: 0.005736385852137046.

The Estimated Coef : [0.50482877 1.99338293 5.13092767]

The Support Radius : 23.736842105263158.

The Samples MSE : 8.515811666921193.

The Coefficients MSE: 0.005730424939712834.

The Estimated Coef : [0.50381934 1.99411507 5.13092767]

The Support Radius : 26.363157894736844.

The Samples MSE : 8.515811666921179.

The Coefficients MSE: 0.00572657261787411.

The Estimated Coef : [0.50309627 1.99470133 5.13092767]

The Support Radius : 28.989473684210527.

The Samples MSE : 8.51581166692119.

The Coefficients MSE: 0.005723943765630234.

The Estimated Coef : [0.50256067 1.99518136 5.13092767]

The Support Radius : 31.61578947368421.

The Samples MSE : 8.515811666921259.

The Coefficients MSE: 0.005722070627738776.

The Estimated Coef : [0.50215291 1.99558165 5.13092767]

The Support Radius : 34.242105263157896.

The Samples MSE : 8.51581166692122.

The Coefficients MSE: 0.005720688508876608.

The Estimated Coef : [0.50183533 1.99592053 5.13092767]

The Support Radius : 36.86842105263158.

The Samples MSE : 8.515811666921207.

The Coefficients MSE: 0.005719638985450664.

The Estimated Coef : [0.50158316 1.99621113 5.13092767]

The Support Radius : 39.49473684210526.

The Samples MSE : 8.515811666921252.

The Coefficients MSE: 0.005718822709033802.

The Estimated Coef : [0.50137961 1.99646308 5.13092767]

The Support Radius : 42.12105263157895.

The Samples MSE : 8.515811666921207.

The Coefficients MSE: 0.005718174876141146.

The Estimated Coef : [0.50121293 1.99668361 5.13092767]

The Support Radius : 44.747368421052634.

The Samples MSE : 8.515811666921078.

The Coefficients MSE: 0.005717651772653998.

The Estimated Coef : [0.50107473 1.99687826 5.13092767]

The Support Radius : 47.373684210526314.

The Samples MSE : 8.515811666921092.

The Coefficients MSE: 0.005717223044907748.

The Estimated Coef : [0.50095887 1.99705132 5.13092767]

The Support Radius : 50.0.

The Samples MSE : 8.515811666921131.

The Coefficients MSE: 0.0057168670795355965.

The Estimated Coef : [0.50086078 1.9972062 5.13092767]